Accelerating Heterogeneous Catalyst Discovery: A Comprehensive Guide to Generative AI Models in 2024

Abstract

This article provides researchers and material scientists with a detailed exploration of generative artificial intelligence (AI) for heterogeneous catalyst discovery. We cover foundational concepts from basic catalyst chemistry to generative model architectures like VAEs, GANs, and diffusion models. The methodological section details practical workflows for training, conditioning, and integrating AI with high-throughput experimentation and DFT calculations. We address critical troubleshooting steps for data scarcity, model hallucinations, and multi-objective optimization. Finally, we present validation frameworks, benchmark current models (including CatBERTa, ChemGPT, and CatalystGAN), and discuss performance metrics. The conclusion synthesizes the transformative potential and future roadmap for generative AI in accelerating sustainable energy and chemical synthesis.

From Atoms to Algorithms: The Foundational Principles of Generative AI for Catalysis

The discovery of novel heterogeneous catalysts is fundamentally limited by the combinatorial vastness of the design space. This space spans multiple, interdependent dimensions whose options multiply, so every added dimension multiplies the total number of possible candidates.

Quantitative Breakdown of the Catalyst Design Space

| Design Dimension | Typical Range of Variables | Estimated Combinatorial Possibilities |
|---|---|---|
| Active Metal/Element | Selection from ~40 plausible transition/post-transition metals | 10¹ – 10² per site |
| Composition & Stoichiometry | Binary, ternary, or high-entropy alloys; doping (≤10 at.%) | 10³ – 10⁸ per base system |
| Surface Facet/Morphology | Major low-index facets (100, 110, 111), high-index, nanoparticles, single-atom | 10¹ – 10² per composition |
| Support Material | Oxide (e.g., Al₂O₃, TiO₂, CeO₂), carbon, zeolite, MXene, etc. | 10¹ – 10² common types |
| Promoter/Dopant Elements | Alkali, alkaline earth, rare earth, other metals (1-3 species) | 10² – 10³ combinations |
| Synthetic Conditions | Temperature, pressure, precursor, time (continuous variables) | Effectively infinite |
| Overall Conservative Estimate | All dimensions combined | >10¹⁰ candidate materials |

This staggering number (>10 billion) renders exhaustive experimental or computational screening intractable. The challenge is further compounded by the need to simultaneously optimize for multiple target properties: activity (turnover frequency), selectivity towards desired products, stability under reaction conditions (sintering, coking, poisoning resistance), and cost.
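Because the dimensions combine multiplicatively, the size of the discrete part of the space is the product of the per-dimension counts, which is simply the sum of their log10 exponents. The minimal Python sketch below assumes the dimensions are independent and takes the table's ranges at face value (one active site, continuous synthesis conditions excluded); the dictionary keys are illustrative names, not a standard schema.

```python
# log10 ranges (low, high) for the discrete dimensions of the design-space table.
# Synthetic conditions are continuous and therefore left out of the count.
DESIGN_DIMENSIONS = {
    "active_metal": (1, 2),
    "composition_stoichiometry": (3, 8),
    "facet_morphology": (1, 2),
    "support": (1, 2),
    "promoters": (2, 3),
}

def log10_space_size(dims):
    """Independent dimensions: counts multiply, so log10 exponents add."""
    low = sum(lo for lo, _ in dims.values())
    high = sum(hi for _, hi in dims.values())
    return low, high

low, high = log10_space_size(DESIGN_DIMENSIONS)
print(f"~10^{low} to 10^{high} discrete candidates before synthesis conditions")
```

With these ranges the discrete space alone spans roughly 10⁸ to 10¹⁷ candidates, so the >10¹⁰ figure sits toward the conservative end, and adding continuous synthesis variables only enlarges it.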

Core Experimental Protocol: High-Throughput Synthesis & Testing

To navigate this vast space, integrated high-throughput (HT) workflows are essential.

Protocol 1: Inkjet-Printed Catalyst Library Synthesis

Objective: To synthesize spatially addressable libraries of distinct catalyst compositions on a single substrate. Detailed Methodology:

- Precursor Ink Formulation: Prepare aqueous or organic solutions of metal salts (nitrates, chlorides, acetylacetonates) at precisely controlled concentrations (0.01–0.1 M).

- Library Design & Printing: Use a piezoelectric inkjet printer equipped with a multi-cartridge system. A CAD file directs the deposition of picoliter droplets onto a polished, inert substrate (e.g., alumina-coated silicon wafer). Composition gradients are achieved by overprinting different inks at varying ratios.

- Calcination & Activation: The printed wafer is transferred to a programmable muffle furnace. It is heated in air (2°C/min ramp to 500°C, hold for 4h) to decompose salts into oxides, followed by a reduction step (5% H₂/Ar, 400°C, 2h) if metallic phases are required.

- Characterization Mapping: The entire wafer is analyzed using automated scanning techniques:
  - X-ray Fluorescence (XRF): For quantitative composition mapping.
  - Scanning X-ray Diffraction (SXRD): For phase identification across the library.
  - Raman Spectroscopy Mapping: For surface species and support structure.
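The library-design step (composition gradients built by overprinting inks at varying ratios) can be sketched as enumerating a ternary composition grid and assigning each point to a printed spot. This is a minimal Python sketch under stated assumptions: the 10% composition step, 12-column layout, 2 mm pitch, and 100-droplet budget per spot are hypothetical, and splitting droplets in proportion to atomic fractions is only valid if all three inks share the same molar metal concentration. Real printers use their own recipe or CAD formats.

```python
from itertools import product

def ternary_grid(step=0.1):
    """Enumerate (A, B, C) atomic fractions on a simplex grid with the given step."""
    n = round(1 / step)
    comps = []
    for i, j in product(range(n + 1), repeat=2):
        k = n - i - j
        if k >= 0:  # keep only points whose fractions sum to 1
            comps.append((i / n, j / n, k / n))
    return comps

def print_layout(comps, columns=12, pitch_mm=2.0, drops_per_spot=100):
    """Assign each composition to a spot on a rectangular grid (hypothetical layout)
    and split a fixed droplet budget among the three inks in proportion to the
    target fractions (assumes equal-molarity inks)."""
    layout = []
    for idx, comp in enumerate(comps):
        row, col = divmod(idx, columns)
        drops = tuple(round(f * drops_per_spot) for f in comp)
        layout.append({"x_mm": col * pitch_mm, "y_mm": row * pitch_mm, "drops": drops})
    return layout

spots = print_layout(ternary_grid(0.1))
```

A 10% step yields 66 compositions, which fit comfortably on a single wafer; halving the step roughly quadruples the library size.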

Protocol 2: Parallelized Reactor Testing (Scanning Mass Spectrometry)

Objective: To evaluate the catalytic performance of each member in a synthesized library under controlled, flowing conditions. Detailed Methodology:

- Reactor Design: A sealed, temperature-controlled chamber with a mass-spectrometer (MS) sampling probe is positioned over the catalyst library wafer.

- Gas Delivery: A calibrated gas mixture (e.g., CO:O₂:H₂:He = 2:1:10:87) flows uniformly over the wafer surface at a total pressure of 1–5 bar.

- Activity Scanning: The MS probe scans predefined positions corresponding to library members. At each point, it measures the consumption of reactants (e.g., m/z=28 for CO) and formation of products (e.g., m/z=44 for CO₂) in real-time.

- Data Processing: Turnover frequencies (TOFs) and selectivities are calculated for each spot from steady-state MS signals, normalized by the active site density estimated from XRF or subsequent chemisorption measurements.
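The data-processing step can be sketched as follows. This is a minimal Python sketch, assuming a linear MS response, conversion taken from the reactant ion current with and without catalyst (bypass), flows in sccm referenced to 0 °C and 1 atm, and site counts from dissociative H₂ chemisorption with one H per surface metal atom. In practice the m/z 28 signal also contains a CO₂ cracking fragment that must be subtracted first; the function names are illustrative.

```python
R = 8.314462618                    # J/(mol K)
T_STP, P_STP = 273.15, 101325.0    # reference conditions assumed for sccm

def sccm_to_mol_per_s(sccm):
    """Volumetric flow in sccm (cm^3/min at 0 C, 1 atm) -> mol/s via the ideal gas law."""
    return sccm * 1e-6 * P_STP / (R * T_STP) / 60.0

def conversion_from_ms(signal_reaction, signal_bypass):
    """Fractional reactant conversion from steady-state ion currents (linear response assumed)."""
    return 1.0 - signal_reaction / signal_bypass

def surface_sites_from_h2(h2_uptake_mol, h_per_site=1.0):
    """Dissociative H2 chemisorption: each H2 supplies 2 H atoms, each covering 1/h_per_site sites."""
    return 2.0 * h2_uptake_mol / h_per_site

def turnover_frequency(co_flow_sccm, conversion, sites_mol):
    """TOF (s^-1) = moles of CO converted per second / moles of active sites."""
    return sccm_to_mol_per_s(co_flow_sccm) * conversion / sites_mol
```

For example, 1 sccm of CO at 10% conversion over 1 nmol of sites corresponds to a TOF of roughly 74 s⁻¹.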

High-Throughput Catalyst Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Category / Item | Example Product/Chemical | Function in Catalyst Research |
|---|---|---|
| Metal Precursors | Metal nitrates (e.g., Ni(NO₃)₂·6H₂O), Chlorides, Acetylacetonates (e.g., Pt(acac)₂) | Source of active metal components for synthesis via impregnation, co-precipitation, or ink formulation. |
| Support Materials | γ-Al₂O₃ powder, TiO₂ (P25), CeO₂ nanocubes, Zeolite Y, Carbon nanotubes | Provide high surface area, stabilize metal nanoparticles, and can participate in catalytic cycles. |
| Promoters | K₂CO₃, La(NO₃)₃, CsOH | Modify electronic or geometric properties of the active phase to enhance activity, selectivity, or stability. |
| HT Synthesis Substrate | Alumina-coated silicon wafers, Anodized aluminum plates | Inert, flat, thermally stable substrates for creating spatially resolved catalyst libraries. |
| Calibration Gas Mixtures | 5% H₂/Ar, 10% CO/He, Certified reaction mixtures (e.g., CO:O₂:H₂:He) | Used for catalyst activation (reduction) and as precisely known feeds for performance testing. |
| Characterization Standards | NIST XRD reference standards, BET reference materials | Calibrate instruments (XRD, surface area analyzers) for accurate, reproducible data. |
| Mass Spectrometer Calibrant | Perfluorotributylamine (PFTBA) | Provides known m/z fragments for daily tuning and calibration of the MS detector in testing rigs. |

The Role of Generative Models in Navigating the Space

Generative models address the search challenge by learning the underlying, high-dimensional probability distribution of promising catalysts from existing data and proposing novel candidates within that constrained space.

Logical Framework for Generative Catalyst Design

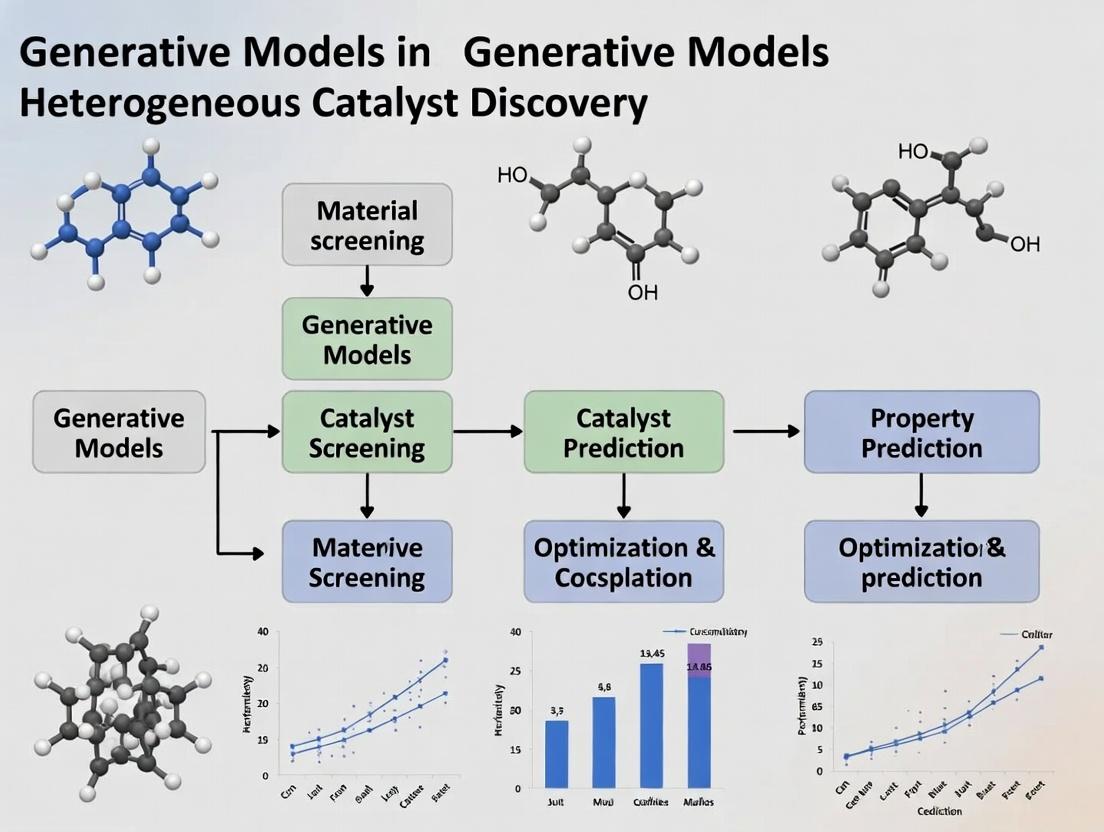

Generative Model Pipeline for Catalyst Discovery

Key Methodology: A Variational Autoencoder (VAE) or Graph Neural Network (GNN)-based generator is trained on known catalyst structures (e.g., from the Materials Project, Catalysis-Hub). The model encodes materials into a continuous latent space; when property information shapes that space during training, proximity correlates with property similarity. A property predictor (a separate neural network) is trained concurrently or subsequently on DFT-calculated adsorption energies or experimental TOFs. One can then traverse the latent space towards regions of optimal predicted properties (e.g., the peak of an activity volcano built from scaling and Brønsted-Evans-Polanyi relations) and decode new, realistic catalyst structures. These candidates are then validated with fast DFT calculations (e.g., DFT+U for transition metal oxides) before experimental prioritization. This approach can reduce the effective search space by many orders of magnitude, focusing effort on the most promising regions of chemical space.
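The latent-space traversal step can be illustrated with gradient descent on a property predictor. This is a minimal sketch, assuming a hypothetical linear surrogate (weights `W`, bias `B`) that maps a 4-dimensional latent code to a predicted *CO adsorption energy; a real predictor would be a trained neural network differentiated with an autodiff framework, and decoding the optimized code into a structure is left to the generative model's decoder (not shown). The small penalty on ‖z‖² keeps the code near the high-density region of the Gaussian prior, where decoded structures tend to remain realistic.

```python
import numpy as np

# Hypothetical linear surrogate: predicted *CO adsorption energy (eV) = W . z + B
W = np.array([0.5, -0.3, 0.8, 0.1])
B = -0.5

def predict(z):
    return float(z @ W + B)

def optimize_latent(z0, target, lr=0.1, prior_weight=0.01, steps=500):
    """Gradient descent on (predict(z) - target)^2 + prior_weight * ||z||^2."""
    z = np.array(z0, dtype=float)
    for _ in range(steps):
        err = predict(z) - target
        grad = 2.0 * err * W + 2.0 * prior_weight * z  # analytic gradient of the objective
        z -= lr * grad
    return z

z_opt = optimize_latent(np.zeros(4), target=-1.2)
```

The prior penalty trades a slight miss on the target (a few meV here) for staying close to the region the decoder was trained on.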

The discovery of high-performance heterogeneous catalysts is a grand challenge in energy and chemical synthesis. Traditional methods, reliant on trial-and-error and linear hypotheses, are slow and resource-intensive. Generative models offer a paradigm shift by learning the complex, high-dimensional relationships between catalyst structure (defined by its core descriptors) and performance, enabling de novo design. This technical guide deconstructs the three fundamental catalyst descriptors—Active Sites, Supports, and Reaction Environments—which serve as the essential, structured input for training generative models. Accurately encoding these descriptors into machine-readable formats is the critical first step for generative AI to propose novel, viable catalysts with targeted properties.

Core Descriptor Deep Dive

Active Sites

The active site is the localized surface region where reactant adsorption and transformation occur. Its electronic and geometric structure dictates activity and selectivity.

Key Quantitative Descriptors:

- Geometric: Coordination number, site symmetry (e.g., fcc, hcp, top), nearest-neighbor distance, ensemble size (e.g., single-atom site vs. multi-atom ensemble).

- Electronic: d-band center (εd), d-band width, Bader charge, valence state, work function.

- Energetic: Adsorption energies of key intermediates (e.g., *CO, *O, *N), activation barriers, scaling relations.

Table 1: Common Active Site Descriptors and Typical Ranges for Transition Metals

| Descriptor | Definition/Calculation Method | Typical Values (Examples: Pt, Cu, Au) | Relevance to Activity |
|---|---|---|---|
| d-band center (εd) | Mean energy of the d-band density of states relative to Fermi level. | Pt(111): ~ -2.5 eV; Cu(111): ~ -3.8 eV | Correlates with adsorbate binding strength; volcano plots. |
| Coordination Number | Number of nearest neighbor metal atoms. | Terrace site: 9; Step site: 7; Kink site: 6 | Lower CN often strengthens binding but can promote poisoning. |
| CO Adsorption Energy | DFT-calculated energy of CO adsorption on a specific site. | Pt(111): ~ -1.5 eV; Cu(111): ~ -0.7 eV | Proxy for binding strength of molecular adsorbates; key for oxidation reactions. |
| Oxygen Binding Energy | DFT-calculated energy of atomic O adsorption. | Pt(111): ~ -3.9 eV; Au(111): ~ -1.2 eV | Central descriptor for ORR, OER; follows scaling relations with *OH. |
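The d-band center in Table 1 is the first moment of the d-projected DOS, and the d-band width is commonly taken from the second central moment. A minimal Python sketch, assuming the projected DOS has already been extracted from a DFT code (e.g., VASP or Quantum ESPRESSO) onto a uniform energy grid and that the window covers the whole d-band; some studies restrict the window or integrate only occupied states, which shifts the values.

```python
import numpy as np

def d_band_moments(energies, d_dos, e_fermi=0.0):
    """d-band center (first moment) and width (sqrt of the second central moment)
    of a d-projected DOS on a uniform grid, both referenced to the Fermi level."""
    e = np.asarray(energies, dtype=float) - e_fermi
    rho = np.asarray(d_dos, dtype=float)
    norm = rho.sum()  # uniform spacing cancels in the ratios below
    center = float((e * rho).sum() / norm)
    width = float(np.sqrt(((e - center) ** 2 * rho).sum() / norm))
    return center, width
```

For a synthetic Gaussian d-band centered at -2.5 eV with unit standard deviation, the function returns a center of -2.5 eV and a width of 1.0 eV.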

Experimental Protocol for Active Site Characterization (X-ray Absorption Spectroscopy - XANES/EXAFS):

- Sample Preparation: Catalyst powder is uniformly loaded into a sample holder (e.g., a 1-mm capillary or a pellet) to achieve an optimal absorption edge step (~1).

- Data Collection: Synchrotron X-rays are tuned across the absorption edge of the active metal (e.g., Pt L3-edge). Fluorescence or transmission mode is used.

- XANES Analysis: The near-edge region is analyzed to determine the average oxidation state and electronic structure via comparison to foil and reference compound spectra.

- EXAFS Analysis: The oscillatory part is extracted and Fourier-transformed to obtain a (phase-uncorrected) radial distribution function. Fitting with theoretical paths yields:
  - Coordination numbers for each shell (nearest neighbors).
  - Interatomic distances.
  - Debye-Waller factors (disorder).

- Quantification: Statistical fitting (e.g., using DEMETER/IFEFFIT software) provides quantitative descriptor values for the active site.
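For reference, the fitted quantities enter through the standard single-scattering EXAFS equation. The coordination number N_j, distance R_j, and Debye-Waller factor σ_j² are the active-site descriptors listed above; the amplitude reduction factor S₀², scattering amplitude f_j(k), phase shift δ_j(k), and photoelectron mean free path λ(k) are typically taken from theoretical path calculations, and k is the photoelectron wavenumber.

```latex
\chi(k) = \sum_j \frac{N_j S_0^2 \, |f_j(k)|}{k R_j^2}
          \, e^{-2 k^2 \sigma_j^2} \, e^{-2 R_j / \lambda(k)}
          \, \sin\!\left( 2 k R_j + \delta_j(k) \right),
\qquad k = \frac{\sqrt{2 m_e (E - E_0)}}{\hbar}
```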

Supports

The support material stabilizes active phase nanoparticles, influences their morphology and electronic structure, and can participate in the reaction via spillover or direct adsorption.

Key Quantitative Descriptors:

- Structural: Surface area (BET), pore size distribution, crystallographic phase, defect density (e.g., oxygen vacancies in oxides).

- Electronic: Fermi level position, acidity/basicity (isoelectric point), band gap (for semiconductors), work function.

- Interaction Strength: Metal-Support Interaction (MSI) energy, adhesion energy, charge transfer quantified by XPS shifts.

Table 2: Common Catalyst Support Materials and Their Descriptors

| Support Material | Key Structural Descriptor (BET S.A.) | Key Electronic Descriptor | Primary Function & Impact on Active Site |
|---|---|---|---|
| Carbon Black (Vulcan XC-72) | ~250 m²/g | Conductivity, variable surface groups | High dispersion, conductive. Weak MSI. |
| γ-Alumina (Al₂O₃) | 150-300 m²/g | Lewis acidity (Al³⁺ sites) | Stabilizes NPs, acidic sites can modify reaction pathways. |
| Ceria (CeO₂) | 50-150 m²/g | Oxygen vacancy formation energy | Provides oxygen storage/release; strong SMSI can encapsulate NPs. |
| Titania (TiO₂) | 50-100 m²/g | n-type semiconductor, reducible | Strong Metal-Support Interaction (SMSI) under reduction, altering activity. |
| Silica (SiO₂) | 200-800 m²/g | Inert, weakly acidic silanols | High S.A. for dispersion; largely inert, isolates NP effects. |
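The BET surface areas in Table 2 come from fitting the linearized BET equation to an N₂ physisorption isotherm. A minimal Python sketch, assuming adsorbed volumes in cm³(STP)/g, a relative-pressure window of roughly 0.05 to 0.30, and the commonly used N₂ cross-sectional area of 0.162 nm²; instrument software applies additional consistency checks (e.g., Rouquerol criteria) that are omitted here.

```python
import numpy as np

N_A = 6.02214076e23              # 1/mol
N2_CROSS_SECTION_M2 = 0.162e-18  # m^2 per adsorbed N2 molecule
STP_MOLAR_VOLUME_CM3 = 22414.0   # cm^3/mol at 0 C, 1 atm

def bet_surface_area(rel_pressure, v_ads_cm3_stp_per_g):
    """Linearized BET fit: x / (v (1 - x)) = 1/(v_m c) + (c - 1)/(v_m c) * x.
    Returns (surface area in m^2/g, monolayer volume v_m, BET constant c)."""
    x = np.asarray(rel_pressure, dtype=float)
    v = np.asarray(v_ads_cm3_stp_per_g, dtype=float)
    y = x / (v * (1.0 - x))                 # BET transform
    slope, intercept = np.polyfit(x, y, 1)  # least-squares straight line
    v_m = 1.0 / (slope + intercept)
    c = 1.0 + slope / intercept
    area = v_m / STP_MOLAR_VOLUME_CM3 * N_A * N2_CROSS_SECTION_M2
    return float(area), float(v_m), float(c)
```

A monolayer volume of 50 cm³(STP)/g corresponds to about 218 m²/g, within the γ-alumina range of Table 2.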

Experimental Protocol for Measuring Metal-Support Interaction (Temperature-Programmed Reduction - TPR):

- Setup: ~50 mg of catalyst is loaded into a U-shaped quartz reactor.

- Pretreatment: The sample is purged with an inert gas (Ar) at 150°C to remove physisorbed water.

- Reduction: A flow of 5% H₂/Ar is passed over the sample while the temperature is ramped linearly (e.g., 10°C/min) to 800°C.

- Detection: A thermal conductivity detector (TCD) measures the H₂ consumption in the effluent gas.

- Analysis: Reduction peak temperatures are identified. A lower temperature peak indicates easier reduction of the active metal oxide, while higher temperature peaks can signify reduction of the support or metal species strongly interacting with the support (e.g., Ni ions incorporated into an alumina lattice). The peak area quantifies reducible species.
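The analysis step (peak temperatures plus peak area) can be sketched in Python. This is a minimal sketch, assuming a baseline-corrected TCD trace sampled uniformly in time and a calibration factor obtained externally, e.g., by reducing a known amount of a CuO reference; the noise-free local-maximum search stands in for the peak fitting or deconvolution normally used on real data.

```python
import numpy as np

def tpr_peaks(temp_c, signal, min_height):
    """Temperatures (C) of local maxima above min_height in a baseline-corrected TCD trace."""
    s = np.asarray(signal)
    return [float(temp_c[i]) for i in range(1, len(s) - 1)
            if s[i] > s[i - 1] and s[i] >= s[i + 1] and s[i] > min_height]

def h2_consumption_umol(time_s, signal, calib_umol_per_unit_area):
    """Integrate signal over time (uniform sampling assumed) and convert the
    area to umol H2 with an external calibration factor."""
    dt = float(time_s[1] - time_s[0])
    return float(np.sum(signal) * dt * calib_umol_per_unit_area)
```

For overlapping peaks (e.g., a dispersed oxide and a strongly interacting phase), fitting a sum of Gaussians is more reliable than picking local maxima.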

Reaction Environment

The conditions under which the catalyst operates dynamically reshape the active site and support, making in-situ/operando characterization critical.

Key Quantitative Descriptors:

- Chemical Environment: Partial pressures of reactants/products, pH (for electrochemistry), solvent identity and polarity.

- Physical Conditions: Temperature, pressure, potential (for electrocatalysis), flow rate.

- Dynamic State: Coverage of intermediates under reaction conditions, surface reconstruction, oxidation state change.

Table 3: Impact of Reaction Environment on Core Descriptors

| Environmental Variable | Typical Range | Impact on Active Site | Impact on Support |
|---|---|---|---|
| Temperature | 300 K - 1200 K | Alters adsorbate coverage, induces reconstruction, sintering. | Can phase change, sinter, or modulate vacancy concentration. |
| Potential (Electrochem) | -1.0 to 2.0 V vs. RHE | Changes oxidation state, adsorbate binding via field effects. | Can corrode (C), reduce (oxide), or alter conductivity. |
| Acidic vs. Basic Electrolyte | pH 0 - 14 | Stabilizes different intermediates (e.g., *O vs. *OH), may leach metal. | May dissolve (e.g., SiO₂ in base), alter surface charge. |
| Reducing/Oxidizing Gas | pO₂ from 10⁻³⁵ to 1 bar | Sets metal oxidation state and surface termination (oxide vs. metal). | Determines redox state (e.g., Ce³⁺/Ce⁴⁺ ratio in ceria). |

Experimental Protocol for Operando Raman Spectroscopy:

- Reactor Cell: Catalyst is placed in a specially designed operando cell that allows control of gas/liquid flow, temperature, and potential while providing optical access.

- Conditioning: The catalyst is brought to the desired reaction conditions (e.g., 1 bar CO+O₂, 300°C).

- Simultaneous Measurement: Raman spectra are continuously collected (laser excitation, e.g., 532 nm) while the catalytic activity is measured via an online mass spectrometer or gas chromatograph.

- Data Correlation: Spectral features (e.g., metal-oxygen vibrations, carbonaceous species bands) are tracked over time and directly correlated with catalytic turnover rates.

- Descriptor Extraction: The correlated data identify the true active phase under reaction conditions (e.g., surface oxide vs. metallic) and the presence/coverage of key intermediates or poisons.
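The data-correlation step can be sketched as integrating one Raman band over time and correlating its area with the measured rate. This is a minimal Python sketch, assuming a uniform Raman-shift grid, a crude flat baseline (the window minimum), and a hypothetical 550 to 650 cm⁻¹ window standing in for whichever metal-oxygen or carbonaceous band is under study; Pearson correlation is one simple choice, not the only one.

```python
import numpy as np

def band_area(shift_cm1, spectrum, window=(550.0, 650.0)):
    """Integrate a Raman band inside `window` after subtracting the window minimum."""
    shift = np.asarray(shift_cm1)
    mask = (shift >= window[0]) & (shift <= window[1])
    seg = np.asarray(spectrum)[mask]
    step = shift[1] - shift[0]
    return float(np.sum(seg - seg.min()) * step)

def correlate_with_rate(shift_cm1, spectra, rates, window=(550.0, 650.0)):
    """Pearson correlation between band area over time and turnover rate."""
    areas = np.array([band_area(shift_cm1, s, window) for s in spectra])
    return float(np.corrcoef(areas, rates)[0, 1]), areas
```

A strong positive correlation suggests the band tracks the active phase or a productive intermediate; a strong negative one points to a poison or spectator species.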

Visualization of Descriptor Interplay and Generative AI Workflow

Diagram Title: Generative AI for Catalyst Discovery

Diagram Title: AI-Driven Catalyst Discovery Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials and Reagents for Catalyst Research

| Item | Function in Research | Example Use-Case |
|---|---|---|
| Metal Precursors | Source of the active metal for synthesis. | Chloroplatinic acid (H₂PtCl₆) for Pt nanoparticle impregnation. |
| High-Surface-Area Supports | Provide a scaffold for nanoparticle dispersion. | Alumina (Al₂O₃) spheres, Carbon Black (Vulcan XC-72R). |
| Structure-Directing Agents | Control nanoparticle morphology during synthesis. | Cetyltrimethylammonium bromide (CTAB) for shape-controlled Pt synthesis. |
| Reducing Agents | Convert metal precursors to zero-valent nanoparticles. | Sodium borohydride (NaBH₄), ethylene glycol (polyol synthesis). |
| Probe Molecules for Characterization | Chemisorb to active sites to quantify and qualify them. | CO for IR spectroscopy, N₂ for BET surface area, H₂ for chemisorption. |
| Calibration Gas Mixtures | Standardize analytical equipment for performance testing. | 1% CO/He for pulse chemisorption; 1% H₂/Ar for TPR. |
| Electrolyte Solutions | Provide ionic conductivity and define pH in electrocatalysis. | 0.1 M Perchloric acid (HClO₄) for acidic ORR/OER studies. |
| Operando Cell Components | Enable characterization under realistic reaction conditions. | X-ray transparent Be windows; high-temp Raman cells with gas flow. |
| Computational Software & Pseudopotentials | Enable DFT calculation of descriptor values. | VASP, Quantum ESPRESSO; PBE functional, PAW pseudopotentials. |

This in-depth guide explores the core generative AI models—Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Diffusion Models—and their transformative role in heterogeneous catalyst discovery research. The discussion is framed within a broader thesis on how these models generate novel, high-performance catalytic materials by learning complex distributions from chemical and structural data.

Core Generative Models: Architectures and Mechanisms

Generative models learn the underlying probability distribution of training data to create new, plausible samples. For catalyst discovery, this data includes chemical compositions, crystal structures, adsorption energies, and reaction descriptors.

Variational Autoencoders (VAEs)

VAEs are probabilistic models consisting of an encoder and a decoder. The encoder compresses input data (e.g., a molecular graph or crystal formula) into a latent vector z sampled from a learned distribution (typically Gaussian). The decoder reconstructs the data from this latent vector. The training objective combines a reconstruction loss with a Kullback-Leibler (KL) divergence term that pulls the latent distribution toward the prior, encouraging a smooth, continuous latent space in which interpolation between encoded materials is meaningful.

Key Application in Catalysis: VAEs can generate novel molecular fragments or catalyst surfaces by sampling from the continuous latent space, enabling the exploration of chemical spaces near known high-performance materials.
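Exploring "near known high-performance materials" amounts to sampling around, or interpolating between, their latent codes. A minimal Python sketch, assuming the encoder has already produced a mean and log-variance for each known catalyst; the decoder that turns each latent vector back into a structure is not shown and would be the trained VAE's own.

```python
import numpy as np

def sample_neighborhood(mu, log_var, n_samples, scale=1.0, rng=None):
    """Reparameterized samples z = mu + scale * sigma * eps around an encoded material."""
    rng = np.random.default_rng() if rng is None else rng
    mu = np.asarray(mu, dtype=float)
    sigma = np.exp(0.5 * np.asarray(log_var, dtype=float))
    eps = rng.standard_normal((n_samples, mu.size))
    return mu + scale * sigma * eps

def interpolate(z_a, z_b, n_points):
    """Linear path between two latent codes (e.g., two known catalysts)."""
    t = np.linspace(0.0, 1.0, n_points)[:, None]
    return (1.0 - t) * np.asarray(z_a, dtype=float) + t * np.asarray(z_b, dtype=float)
```

Lowering `scale` keeps samples closer to the parent material; linear paths are the simplest choice, and spherical interpolation is sometimes preferred for Gaussian priors.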

Generative Adversarial Networks (GANs)

GANs employ a two-network adversarial framework: a Generator (G) creates candidate samples from noise, and a Discriminator (D) evaluates whether samples are real (from training data) or fake (from G). Through iterative training, G learns to produce data indistinguishable from real catalytic materials.

Key Application in Catalysis: GANs have been used to generate hypothetical porous material structures and alloy nanoparticles with targeted properties like surface area or coordination numbers.
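The adversarial objective can be written directly in terms of discriminator logits. A minimal Python sketch of the standard binary cross-entropy discriminator loss and the widely used non-saturating generator loss; in practice these are computed inside an autodiff framework and minimized alternately, and Wasserstein variants (as in Protocol 2 below) replace them with a critic score.

```python
import numpy as np

def softplus(x):
    return np.logaddexp(0.0, x)  # log(1 + e^x), numerically stable

def discriminator_loss(logits_real, logits_fake):
    """BCE with real samples labeled 1 and generated samples labeled 0."""
    return float(np.mean(softplus(-logits_real)) + np.mean(softplus(logits_fake)))

def generator_loss(logits_fake):
    """Non-saturating generator loss: -log D(G(z))."""
    return float(np.mean(softplus(-logits_fake)))
```

When the discriminator cannot tell real from generated structures (all logits zero), its loss equals 2 ln 2 ≈ 1.386, the equilibrium value.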

Diffusion Models

Diffusion models work by a forward and a reverse process. The forward process gradually adds Gaussian noise to training data over many steps until it becomes pure noise. The reverse process trains a neural network (typically a U-Net for image-like data; graph neural networks are common for atomic structures) to predict and remove that noise, learning to recover the original data. For generation, the model starts with random noise and iteratively denoises it.

Key Application in Catalysis: Diffusion models show promise in generating atomic coordinates for complex bimetallic clusters or defect-laden surfaces, as they excel at capturing complex, high-fidelity distributions.
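The forward process has a closed form, so a noisy sample at any step t can be drawn directly from the clean data. A minimal Python sketch using the linear β schedule (1e-4 to 0.02 over 1000 steps) common in DDPM-style models; here x0 stands for any continuous representation, such as atomic coordinates, and the returned noise is the target the denoising network learns to predict.

```python
import numpy as np

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    betas = np.linspace(beta_start, beta_end, T)
    alpha_bar = np.cumprod(1.0 - betas)  # fraction of signal variance retained after t steps
    return betas, alpha_bar

def q_sample(x0, t, alpha_bar, rng):
    """Closed-form forward step: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    eps = rng.standard_normal(np.shape(x0))
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps  # eps is the regression target for the denoiser
```

By the last step, alpha_bar has fallen below 1e-4, so x_T is effectively pure Gaussian noise, which is what makes sampling from noise possible.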

Quantitative Comparison of Generative Models

Table 1: Comparative Analysis of Generative AI Models for Catalyst Design

| Feature | VAE | GAN | Diffusion Model |
|---|---|---|---|
| Training Stability | High; single, well-behaved objective (the ELBO) | Low, prone to mode collapse | High, but computationally intensive |
| Sample Diversity | Good, but can produce blurry samples | Can be high if training converges | Excellent, high-quality outputs |
| Latent Space | Continuous, interpretable, interpolatable | Often discontinuous, less interpretable | Typically not directly accessible |
| Primary Catalyst Use Case | Exploring continuous property optimizations | Generating novel structural motifs | High-fidelity inverse design of surfaces |
| Example Metric (from literature) | ~75% validity for generated organic molecules | ~50-80% novelty for generated MOFs | >90% structural stability for generated crystals |

Table 2: Performance Benchmarks on Catalyst-Relevant Tasks (Hypothetical, Illustrative Values Modeled on Recent Studies)

| Model Type | Task | Success Rate (%) | Property Prediction RMSE (eV) | Computational Cost (GPU hrs) |
|---|---|---|---|---|
| VAE | Composition Generation for Oxidation Catalysts | 68 | 0.15 | 120 |
| GAN (Wasserstein) | Porous Material Structure Generation | 82 | 0.22 | 250 |
| Conditional Diffusion | Transition State Geometry Generation | 91 | 0.08 | 950 |

Experimental Protocols for Generative AI in Catalyst Discovery

Protocol 1: Training a Conditional VAE for Dopant Prediction

Objective: Generate novel doped perovskite compositions (ABO₃) for the oxygen evolution reaction (OER).

- Data Curation: Assemble a dataset of known perovskites with OER activity from ICSD and Catalysis-Hub. Features include elemental descriptors (electronegativity, ionic radius), formation energy, and band gap.

- Model Architecture: Implement an encoder with 3 dense layers (256 neurons) mapping to latent mean and variance vectors (dim=32). Decoder mirrors encoder. A condition vector (desired adsorption energy range) is concatenated to the latent vector.

- Training: Use Adam optimizer (lr=1e-4). Loss = MSE(reconstruction) + β * KL-divergence. Train for 1000 epochs with batch size 64.

- Validation: Assess validity via a separate classifier trained on crystal stability rules. Evaluate property prediction accuracy with a DFT surrogate model.
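The architecture and loss of this protocol can be sketched as a single NumPy forward pass. This is a minimal sketch, not a training script: the feature and condition sizes are hypothetical, weights are randomly initialized, and the condition is concatenated to the encoder input as well as to the latent vector (a common CVAE choice that goes beyond the protocol text). Training with Adam (lr = 1e-4) would require an autodiff framework such as PyTorch or JAX.

```python
import numpy as np

# Illustrative sizes: 16 input features and a 2-value condition (energy range) are
# assumptions; 3 x 256 hidden units and a 32-dim latent follow the protocol.
N_FEAT, N_COND, HIDDEN, LATENT = 16, 2, 256, 32
rng = np.random.default_rng(0)

def dense_stack(sizes):
    """He-initialized (weight, bias) pairs for consecutive dense layers."""
    return [(rng.normal(0.0, np.sqrt(2.0 / m), (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def relu_mlp(x, layers):
    for W, b in layers:
        x = np.maximum(x @ W + b, 0.0)  # ReLU after every hidden layer
    return x

encoder = dense_stack([N_FEAT + N_COND, HIDDEN, HIDDEN, HIDDEN])
W_mu, b_mu = dense_stack([HIDDEN, LATENT])[0]
W_lv, b_lv = dense_stack([HIDDEN, LATENT])[0]
decoder = dense_stack([LATENT + N_COND, HIDDEN, HIDDEN, HIDDEN])
W_out, b_out = dense_stack([HIDDEN, N_FEAT])[0]

def cvae_forward(x, c, beta=1.0):
    """One forward pass returning (MSE + beta * KL, reconstruction); output heads are linear."""
    h = relu_mlp(np.concatenate([x, c], axis=1), encoder)
    mu, log_var = h @ W_mu + b_mu, h @ W_lv + b_lv
    z = mu + np.exp(0.5 * log_var) * rng.standard_normal(mu.shape)  # reparameterization
    x_hat = relu_mlp(np.concatenate([z, c], axis=1), decoder) @ W_out + b_out
    recon = np.mean(np.sum((x - x_hat) ** 2, axis=1))  # reconstruction term
    kl = np.mean(-0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var), axis=1))
    return float(recon + beta * kl), x_hat
```

At generation time, only the decoder is used: sample z from the standard normal prior, append the desired condition vector, and decode.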

Protocol 2: Deploying a GAN for Nanoparticle Morphology Generation

Objective: Generate 3D atomic structures of Pt-Co nanoparticles.

- Data Preparation: Use molecular dynamics simulations to create a library of nanoparticle structures (1-5 nm). Voxelize structures into 3D grids encoding atom type and occupancy.

- GAN Setup: Use a 3D convolutional generator. The discriminator is also 3D convolutional. Implement Wasserstein loss with gradient penalty (WGAN-GP) for stability.

- Conditioning: Condition the GAN on target properties like Co composition (%) or average coordination number via embedding layers.

- Evaluation: Use radial distribution function (RDF) analysis to compare generated structures with physical benchmarks. Perform energy minimization via DFT to check stability.
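The voxelization step in the data preparation above can be sketched as binning atoms into a per-element occupancy grid. A minimal Python sketch, assuming Cartesian coordinates in Å centered on the particle, a 6 nm box (large enough for the 1 to 5 nm particles), and a 32³ grid; the box size, grid size, and the `ELEMENT_CHANNELS` mapping are illustrative choices, and many implementations smear each atom with a Gaussian instead of hard binning.

```python
import numpy as np

ELEMENT_CHANNELS = {"Pt": 0, "Co": 1}  # one occupancy channel per element (assumption)

def voxelize(symbols, positions, box_length=60.0, n_vox=32):
    """Bin atoms into an (n_channels, n_vox, n_vox, n_vox) occupancy grid.
    positions: (N, 3) Cartesian coordinates in angstrom, centered at the origin."""
    grid = np.zeros((len(ELEMENT_CHANNELS), n_vox, n_vox, n_vox), dtype=np.float32)
    voxel = box_length / n_vox
    # shift so the particle center maps to the grid center, then bin
    idx = np.floor((np.asarray(positions, dtype=float) + box_length / 2) / voxel).astype(int)
    for sym, (i, j, k) in zip(symbols, idx):
        if 0 <= i < n_vox and 0 <= j < n_vox and 0 <= k < n_vox:  # drop atoms outside the box
            grid[ELEMENT_CHANNELS[sym], i, j, k] += 1.0
    return grid
```

With a voxel edge of about 1.9 Å, nearest-neighbor Pt atoms (about 2.8 Å apart) generally fall into different voxels; finer grids reduce collisions at the cost of memory in the 3D convolutions.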

Protocol 3: Inverse Design with a Latent Diffusion Model

Objective: Inverse design of supported metal catalyst surfaces for specific adsorption energies.

- Forward Process: Define a noise schedule over 1000 steps to gradually corrupt graph representations of surfaces (atoms as nodes, bonds as edges).

- Denoising Network: Employ a graph neural network (GNN) as the denoiser. The network takes a noisy graph and timestep as input.

- Conditioning: The model is conditioned on a descriptor vector of target properties (e.g., CO adsorption energy = -1.2 eV, O* binding energy = 1.8 eV).

- Sampling: Generate new surface structures by sampling random noise and running the reverse denoising process guided by the condition vector. Validate outputs with ab initio thermodynamics.
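The sampling step can be sketched with the standard DDPM ancestral update. This is a minimal Python sketch under several assumptions: conditioning uses classifier-free guidance (one common technique, substituted here because the protocol does not specify how the condition steers sampling); the graph is reduced to an array of node coordinates; and `toy_eps_model` is a hypothetical stand-in for the trained GNN denoiser, so the output is not a meaningful structure.

```python
import numpy as np

def linear_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    betas = np.linspace(beta_start, beta_end, T)
    alphas = 1.0 - betas
    return betas, alphas, np.cumprod(alphas)

def toy_eps_model(x, t, cond):
    """Hypothetical stand-in for a trained GNN noise predictor; cond=None is unconditional."""
    shift = 0.0 if cond is None else 0.1 * float(np.mean(cond))
    return 0.5 * x + shift

def guided_sample(shape, cond, guidance=2.0, T=1000, seed=0):
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bar = linear_schedule(T)
    x = rng.standard_normal(shape)  # start from pure noise
    for t in reversed(range(T)):
        eps_u = toy_eps_model(x, t, None)
        eps_c = toy_eps_model(x, t, cond)
        eps = eps_u + guidance * (eps_c - eps_u)  # classifier-free guidance
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alphas[t])
        noise = rng.standard_normal(shape) if t > 0 else 0.0  # no noise on the final step
        x = mean + np.sqrt(betas[t]) * noise
    return x
```

Larger `guidance` values push samples harder toward the target descriptors at the cost of diversity; generated structures would then go to the ab initio thermodynamics check described above.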

Visualizing Generative Workflows for Catalysis

Diagram 1: Conditional VAE workflow for catalyst generation.

Diagram 2: GAN adversarial training for catalyst generation.

Diagram 3: Diffusion model process for catalyst inverse design.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Generative AI in Catalysis

| Item / Software | Category | Role in Generative Catalyst Workflow |
|---|---|---|
| PyTorch / TensorFlow | Deep Learning Frameworks | Building and training the neural network architectures (VAE, GAN, Diffusion). |
| ASE (Atomic Simulation Environment) | Atomistic Modeling Toolkit | Processing catalyst structures, calculating basic descriptors, and interfacing with DFT codes. |
| RDKit | Cheminformatics Library | Handling molecular representations (SMILES, graphs) for molecular catalyst generation. |
| Pymatgen | Python Materials Genomics | Processing crystalline materials data (CIF files), generating composition/structural features. |
| Catalysis-Hub | Database | Source of computed (DFT) reaction and adsorption energetics for training and validation. |
| Gaussian/ORCA/VASP | Electronic Structure Codes | Performing DFT calculations to validate the stability and activity of generated catalysts. |
| OCP (Open Catalyst Project) | Pre-trained Models | Using transfer learning for property prediction to guide or condition the generative model. |
| Docker/Singularity | Containerization | Ensuring reproducible computational environments for complex model training pipelines. |

Why Generative Models? Moving Beyond High-Throughput Screening to De Novo Design

The discovery of heterogeneous catalysts has long been constrained by the Edisonian approach of high-throughput screening (HTS), which explores a limited, pre-defined chemical space. This is inherently inefficient for the vast, complex multi-dimensional space governing catalyst performance (e.g., composition, structure, surface morphology). Generative models represent a paradigm shift, enabling de novo design—the intelligent creation of novel, optimal catalyst candidates from scratch. Framed within the thesis of accelerating catalyst discovery, these models learn the underlying probability distribution of known catalytic materials and their properties to generate new, plausible, and high-performing structures.

How Generative Models Work: Core Architectures for Catalyst Design

Generative models for catalyst discovery are trained on databases like the Materials Project, Catalysis-Hub, or NOMAD. They encode complex relationships between elemental composition, crystal structure, and catalytic properties (e.g., adsorption energies, activity, selectivity).

Key Architectures:

- Variational Autoencoders (VAEs): Encode a material (e.g., via crystal graph) into a latent space distribution. Sampling from this space and decoding produces new structures. Conditional VAEs can generate materials targeting specific property values.

- Generative Adversarial Networks (GANs): A generator creates candidate materials, while a discriminator evaluates their plausibility against real data. Their adversarial training pushes the generator to produce increasingly realistic candidates.

- Flow-Based Models: Learn invertible transformations between the complex data distribution and a simple base distribution (e.g., Gaussian), allowing for exact likelihood calculation and efficient sampling.

- Diffusion Models: Gradually add noise to training data and learn to reverse this process, enabling the generation of high-fidelity, novel structures from noise.
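The "exact likelihood" property of flow-based models follows from the change-of-variables formula, log p(x) = log p_z(f(x)) + log|det ∂f/∂x|. A minimal Python sketch using the simplest invertible map, an elementwise affine flow; practical flows stack learnable nonlinear invertible layers (e.g., coupling layers), but the likelihood bookkeeping is the same.

```python
import numpy as np

LOG_2PI = np.log(2.0 * np.pi)

def affine_flow_log_prob(x, shift, log_scale):
    """Exact log-likelihood for x = shift + exp(log_scale) * z with z ~ N(0, I)."""
    z = (np.asarray(x, dtype=float) - shift) * np.exp(-log_scale)  # inverse map f(x)
    log_pz = -0.5 * np.sum(z**2 + LOG_2PI, axis=-1)                # standard normal base density
    log_det = -np.sum(log_scale) * np.ones_like(log_pz)            # log|det df/dx| of the inverse map
    return log_pz + log_det
```

Because the map is invertible, sampling is equally direct: draw z from the base Gaussian and compute shift + exp(log_scale) * z.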

Quantitative Data: Generative Models vs. Traditional HTS

Table 1: Comparative Performance Metrics in Catalyst Discovery Workflows

| Metric | High-Throughput Screening (HTS) | Generative Model-Driven Design |
|---|---|---|
| Exploration Rate | ~10²-10⁴ candidates per cycle | ~10⁵-10⁶ candidates in latent space |
| Success Rate | Typically <1% hit rate | Can exceed 10% for targeted properties |
| Design Cycle Time | Months (synthesis → test → analyze) | Days (in-silico generation → downselection) |
| Chemical Space Coverage | Limited to pre-synthesized libraries | Can propose candidates beyond known libraries |
| Primary Cost Driver | Physical experimentation & logistics | Computational resources & data curation |

Table 2: Published Results from Generative Catalyst Design Studies

| Study Focus (Year) | Model Type | Key Outcome | Validation |
|---|---|---|---|
| OER Catalysts (2023) | Conditional VAE | Generated 50 novel ternary metal oxides; 3 predicted candidates showed overpotential < 0.4V via DFT. | DFT validation; 1 synthesized and tested. |
| CO2 Reduction (2024) | Diffusion Model | Designed 120 unique bimetallic alloys; identified 12 with *COOH binding energy in optimal range (±0.2 eV). | High-throughput DFT screening confirmed predictions. |
| Methane Activation (2022) | Graph-Based GAN | Proposed 15 new perovskite compositions; 4 exhibited methane conversion probability >2x baseline. | Microkinetic modeling and 2 experimental syntheses. |

Experimental & Computational Protocols

Protocol 1: Training a Conditional Crystal Diffusion Model for Alloy Design

- Data Curation: Assemble a dataset of known alloys (e.g., from ICSD) with associated properties (e.g., d-band center, formation energy). Represent each crystal as a graph (atoms=nodes, bonds=edges).

- Noising Process: Define a forward diffusion process that gradually adds Gaussian noise to the node (atom type) and edge (bond) features over T timesteps.

- Model Training: Train a neural network (e.g., Equivariant GNN) to reverse the noising process. Condition the model on target property values (e.g., optimal adsorption energy).

- Sampling: Generate candidates by sampling random noise and iteratively denoising it using the trained model, guided by the condition.

- Filtering & Validation: Pass generated structures through stability filters (e.g., based on formation energy). Validate top candidates with Density Functional Theory (DFT) calculations.
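A formation-energy stability filter is usually expressed as energy above the convex hull. A minimal Python sketch for a binary A-B system only, assuming formation energies in eV/atom with the pure elements at zero and a hypothetical 0.05 eV/atom tolerance; multicomponent systems are normally handled with a full phase-diagram tool such as pymatgen's phase-diagram module.

```python
import numpy as np

def _cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def lower_hull(points):
    """Lower convex hull of (x_B, E_form) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()  # drop points that lie on or above the lower boundary
        hull.append(p)
    return hull

def energy_above_hull(x, e_form, reference_points):
    """eV/atom above the hull; elemental end members sit at (0, 0) and (1, 0)."""
    hull = lower_hull(list(reference_points) + [(0.0, 0.0), (1.0, 0.0)])
    hx, he = zip(*hull)
    return float(e_form - np.interp(x, hx, he))

def stability_filter(candidates, reference_points, threshold=0.05):
    """Keep (name, x, E_form) candidates within `threshold` eV/atom of the hull."""
    return [name for name, x, e in candidates
            if energy_above_hull(x, e, reference_points) <= threshold]
```

A negative energy above hull means the candidate would itself lie on a new, lower hull, which makes it a particularly interesting target for DFT validation.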

Protocol 2: Validating Generative Model Outputs with High-Throughput DFT

- Structure Relaxation: Use DFT (VASP, Quantum ESPRESSO) to relax the generated crystal structure, optimizing atomic positions and cell volume.

- Property Prediction: Calculate key catalytic descriptors:
  - Adsorption energies of key intermediates (e.g., *CO, *OOH).
  - Surface energy and thermodynamic stability.
  - Electronic structure properties (d-band center, density of states).

- Activity Mapping: Map descriptors to activity volcanoes (e.g., for OER, HER, CO2RR). Rank candidates by proximity to the volcano peak.

- Experimental Prioritization: Select top-ranked, synthetically accessible candidates for wet-lab synthesis and testing.
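For OER, activity mapping is often done through the theoretical overpotential of the four-step associative mechanism (* → *OH → *O → *OOH → O₂), which is lowest at the volcano peak. A minimal Python sketch, assuming adsorption free energies in eV referenced to H₂O and H₂ at 0 V (computational hydrogen electrode convention); the candidate names are hypothetical.

```python
EQUILIBRIUM_POTENTIAL = 1.23  # V, OER equilibrium potential

def oer_overpotential(dg_oh, dg_o, dg_ooh):
    """Theoretical overpotential (V): largest step free energy minus 1.23 eV."""
    steps = [dg_oh, dg_o - dg_oh, dg_ooh - dg_o, 4.92 - dg_ooh]  # 4.92 eV = 4 x 1.23
    return max(steps) - EQUILIBRIUM_POTENTIAL

def rank_candidates(candidates):
    """Sort {name: (dG_OH, dG_O, dG_OOH)} by overpotential, closest to the volcano peak first."""
    scored = {name: oer_overpotential(*dgs) for name, dgs in candidates.items()}
    return sorted(scored.items(), key=lambda item: item[1])
```

An ideal catalyst with every step at 1.23 eV has zero overpotential, and the step that sets `max(steps)` identifies the potential-determining step to target in the next design cycle.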

Visualizing the Workflow

Title: Generative Model Catalyst Discovery Workflow

Title: Conditional VAE for Targeted Catalyst Generation

Table 3: Key Research Reagent Solutions for Generative Catalyst Research

| Item | Function in Generative Catalyst Discovery |
|---|---|
| VASP / Quantum ESPRESSO | First-principles DFT software for calculating formation energies, adsorption energies, and electronic structures of generated candidates. Essential for validation. |
| Pymatgen / ASE | Python libraries for manipulating, analyzing, and standardizing crystal structures. Crucial for data preprocessing and post-processing model outputs. |
| Materials Project API | Provides programmatic access to a vast database of computed material properties. Used for training data and benchmarking. |
| OCP (Open Catalyst Project) | Provides datasets, benchmarks, and ML models specifically for catalyst discovery. Includes Graph Neural Network force fields. |
| CatBERT / ChemBERTa | Pre-trained transformer models on chemical literature or SMILES strings. Can be fine-tuned for property prediction or used as molecular descriptors. |
| High-Purity Metal Salts / Precursors | For sol-gel, hydrothermal, or impregnation synthesis of predicted oxide or alloy catalysts in the validation phase. |
| Plug-and-Play GC/MS/HPLC Systems | For rapid experimental characterization of catalyst activity, selectivity, and stability in test reactions (e.g., CO2 reduction, methane oxidation). |

The application of generative models to heterogeneous catalyst discovery research represents a paradigm shift from high-throughput screening to intelligent, design-led exploration. This paradigm relies fundamentally on large-scale, high-quality data for training, validation, and benchmarking. Three pivotal resources—Catalysis-Hub, the Materials Project, and the Open Catalyst 2020 (OC20) dataset—form the essential data infrastructure that enables generative AI to propose novel, stable, and active catalytic materials. This whitepaper provides a technical guide to these resources, detailing their structure, access, and integration into generative workflows.

Catalysis-Hub

Catalysis-Hub.org is a community-driven repository for surface science and catalysis data, specializing in computationally derived (primarily DFT) reaction energies and activation barriers on catalytic surfaces.

Core Data Schema and Access

Data is organized by publication and reaction, with each entry linked to its underlying atomic structures, and can be queried through a public GraphQL API. Entries include calculated adsorption energies, transition states, reaction energies, and vibrational frequencies for a wide range of surface reactions. The underlying electronic structure calculations are typically performed using Density Functional Theory (DFT).

Quantitative Summary of Catalysis-Hub Data:

| Data Category | Approximate Count (as of 2024) | Key Descriptors |
|---|---|---|
| Adsorption Energies | > 100,000 entries | Molecule, surface facet, adsorption site, DFT functional, energy |
| Reaction Energies | > 20,000 reactions | Reactants, products, catalyst material, reaction energy, barrier |
| Elemental Surfaces | ~70 pure metals & bimetallics | Crystal structure, lattice constant, Miller indices |
| Reaction Networks | For key processes (e.g., NH3 synthesis, CO2 reduction) | Microkinetic modeling parameters |

Experimental/Computational Protocol for Cited Data

A standard DFT calculation protocol from the repository is summarized below:

- Geometry Optimization: Use the VASP or Quantum ESPRESSO code with a plane-wave basis set.

- Exchange-Correlation Functional: Employ the RPBE functional, often with a DFT-D3 dispersion correction.

- Slab Model: Create a periodic slab model (≥ 3 atomic layers) with a vacuum layer ≥ 15 Å. Fix bottom 1-2 layers.

- Brillouin Zone Sampling: Use a Monkhorst-Pack k-point grid with a density of at least 0.04 Å⁻¹.

- Convergence: Set electronic energy convergence to 10⁻⁵ eV and ionic force convergence to 0.03 eV/Å.

- Energy Reference: Calculate adsorption energy as E(adsorbate/slab) – E(slab) – E(adsorbate_gas).
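The adsorption-energy definition above can be written as a small helper. For atomic adsorbates such as O, an isolated gas-phase atom is a poor DFT reference. A common convention, assumed here rather than taken from Catalysis-Hub documentation, therefore builds the reference from stable molecules, e.g. E(O) ≈ E(H2O) − E(H2). The function names and all energies below are hypothetical placeholders.

```python
def adsorption_energy(e_slab_ads, e_slab, e_reference):
    """E_ads = E(adsorbate/slab) - E(slab) - E(reference); all totals in eV from identical DFT settings."""
    return e_slab_ads - e_slab - e_reference


def oxygen_reference(e_h2o, e_h2):
    """Reference energy for atomic O taken from closed-shell molecules: E(H2O) - E(H2)."""
    return e_h2o - e_h2


# Placeholder total energies (eV); real values depend on the code, functional, and settings.
e_ref_o = oxygen_reference(e_h2o=-14.22, e_h2=-6.77)
print(round(adsorption_energy(e_slab_ads=-305.50, e_slab=-300.00, e_reference=e_ref_o), 2))  # 1.95
```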

Materials Project

The Materials Project (MP) is a comprehensive database of calculated properties for over 150,000 inorganic compounds and 1,000,000+ materials derived from them, generated via high-throughput DFT using a consistent computational framework.

Core Data for Catalyst Discovery

MP provides foundational bulk crystal structures and properties essential for identifying stable catalyst candidates. Key data includes formation energy, band structure, elastic tensor, and thermodynamic stability (phase diagram).

Quantitative Summary of Key Materials Project Data:

| Property Category | Number of Entries | Relevance to Catalysis |
|---|---|---|
| Crystalline Materials | > 150,000 | Primary source of bulk structures for surface generation |
| Theoretical Phase Diagrams | > 70,000 systems | Predicts thermodynamic stability under varying chemical potentials |
| Electronic Structure | Band gaps for ~80,000 materials | Informs on conductivity & potential for electron transfer |
| Surface Energies | For high-symmetry facets of common materials | Estimates surface stability and morphology |

Workflow for Integrating MP Data into Generative Models

Generative models often use MP as a source of "seed" structures or for stability validation.

Diagram Title: Validating Generative Model Outputs with Materials Project
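For a binary A-B system, the stability check against Materials Project phases reduces to comparing a candidate's formation energy with the lower convex hull of known phases. The pure-Python sketch below makes two assumptions: formation energies are per atom (eV/atom), and they were computed with settings compatible with the reference data. The phase energies are hypothetical placeholders. For multicomponent systems, pymatgen's `PhaseDiagram` class provides the equivalent analysis.

```python
def _cross(o, a, b):
    """z-component of (a - o) x (b - o); > 0 means o -> a -> b turns counter-clockwise."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (x_B, formation energy per atom) points (Andrew's monotone chain)."""
    best = {}
    for x, e in points:                       # keep only the lowest-energy phase at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()                        # hull[-1] lies on or above the segment hull[-2] -> p
        hull.append(p)
    return hull


def energy_above_hull(x, e_form, known_phases):
    """Candidate energy minus hull energy at its composition (eV/atom); negative = below the known hull."""
    hull = lower_hull(known_phases + [(0.0, 0.0), (1.0, 0.0)])  # pure elements define zero
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e_form - (e0 + (e1 - e0) * (x - x0) / (x1 - x0))
    raise ValueError("composition must lie in [0, 1]")


# Hypothetical known phases in an A-B system: (fraction of B, formation energy in eV/atom).
known = [(0.5, -0.40), (0.25, -0.15)]
print(round(energy_above_hull(0.25, -0.12, known), 3))   # 0.08: above the hull
print(round(energy_above_hull(0.75, -0.25, known), 3))   # -0.05: below the known hull
```

A negative value flags a candidate that, if confirmed by DFT, would become a new stable phase in that system.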

Open Catalyst 2020 (OC20)

The OC20 dataset, released by Meta AI (FAIR) in collaboration with Carnegie Mellon University, is explicitly designed for machine learning in catalysis. It contains approximately 1.28 million DFT relaxations of adsorbate-catalyst systems (about 265 million single-point calculations), providing atomic structures, initial and relaxed states, energies, and forces.

Dataset Structure and Significance

OC20 is structured for direct use in training graph neural networks (GNNs) and other ML models to predict relaxed structures and energies, bypassing expensive DFT.

Quantitative Summary of the OC20 Dataset:

| Split | Number of Systems | Description |
|---|---|---|
| Training (Total) | ~1,140,000 | Diverse adsorbates on varied surfaces |
| ID | 460,000 | In-distribution data for validation |
| OOD Ads | 460,000 | New adsorbates, known surfaces |
| OOD Cat | 460,000 | New catalyst materials, known adsorbates |
| OOD Both | 87,000 | New adsorbates on new catalysts |

Key ML Task Protocol

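A central task is Structure to Energy and Forces (S2EF) prediction: given an atomic configuration (any frame along a relaxation trajectory), predict its energy and per-atom forces. The related Initial Structure to Relaxed Structure (IS2RS) and Initial Structure to Relaxed Energy (IS2RE) tasks start from the initial adsorbate/slab configuration and predict the relaxed structure and the relaxed energy, respectively.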

Diagram Title: OC20 S2EF Task for ML Model Training
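OC20 evaluates S2EF models with several metrics, including energy and force mean absolute errors. The sketch below computes only those two metrics in NumPy. It assumes one energy per structure and one (n_atoms, 3) force array per structure. The official OCP evaluator also reports other metrics, such as force cosine similarity, and should be used for benchmark numbers.

```python
import numpy as np


def s2ef_mae(e_pred, e_true, f_pred, f_true):
    """Energy MAE over structures (eV) and force MAE over every atom and x/y/z component (eV/Å).

    f_pred / f_true are lists of (n_atoms_i, 3) arrays, one per structure, since atom counts differ.
    """
    energy_mae = float(np.mean(np.abs(np.asarray(e_pred) - np.asarray(e_true))))
    force_mae = float(np.mean(np.abs(np.concatenate(f_pred) - np.concatenate(f_true))))
    return energy_mae, force_mae


# Two toy structures with 2 and 1 atoms (placeholder numbers).
e_mae, f_mae = s2ef_mae(
    e_pred=[-1.0, 0.5], e_true=[-1.2, 0.5],
    f_pred=[np.zeros((2, 3)), np.ones((1, 3))],
    f_true=[np.full((2, 3), 0.1), np.ones((1, 3))],
)
print(f"energy MAE = {e_mae:.3f} eV, force MAE = {f_mae:.4f} eV/Å")
```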

The Scientist's Toolkit: Essential Research Reagent Solutions

| Tool / Resource | Category | Function in Catalyst Discovery Research |
|---|---|---|
| VASP / Quantum ESPRESSO | Software | Performs first-principles DFT calculations to generate reference data for energies and structures. |
| ASE (Atomic Simulation Environment) | Python Library | Manipulates atoms, interfaces with DFT codes, and builds computational workflows. |
| Pymatgen | Python Library | Analyzes materials data, interfaces with MP API, and handles crystal structures. |
| OCP (Open Catalyst Project) Codebase | ML Framework | Provides trained models and tools to run ML-driven relaxations on new catalyst systems. |
| CatHub API / MP API | Web API | Programmatically queries reaction energies (CatHub) or bulk material properties (MP). |
| RDKit | Chemistry Library | Handles molecular representations (SMILES, 3D) for adsorbate generation and featurization. |
| PyTorch Geometric | ML Library | Builds and trains graph neural network models on atomic systems (OC20). |
| SLURM / HPC Cluster | Infrastructure | Manages computational jobs for large-scale DFT or ML model training. |

Building Your AI Catalyst Generator: Methodologies and Real-World Applications

The systematic discovery of heterogeneous catalysts is a grand challenge in chemical engineering and materials science. This whitepaper explores a modern workflow architecture designed to accelerate this discovery, framed within a central thesis: Generative models act as intelligent, hypothesis-generating engines that guide and are refined by first-principles simulations (DFT) and mechanistic kinetics (Microkinetic Modeling), creating a closed-loop, iterative design cycle for novel catalytic materials.

Foundational Pillars: DFT and Microkinetic Modeling

Density Functional Theory (DFT): The Electronic Structure Workhorse

DFT provides quantum-mechanical calculations of adsorption energies, activation barriers, and electronic properties. It is the primary source of energetic parameters for microkinetic models.

Computational Protocol (Standard DFT Calculation for Adsorption Energy):

- Geometry Optimization: Optimize the clean catalyst slab/surface model using a conjugate gradient algorithm until forces are < 0.01 eV/Å.

- Molecule Optimization: Optimize the gas-phase adsorbate (e.g., CO, O₂, H₂) in a large, periodic box.

- Adsorption Site Sampling: Place the adsorbate on high-symmetry sites (e.g., atop, bridge, hollow) of the optimized slab.

- Slab+Adsorbate Optimization: Re-optimize the combined system, allowing surface atoms in the top two layers to relax.

- Energy Calculation: Calculate the adsorption energy (E_ads) using:

$$E_{\text{ads}} = E_{\text{slab+adsorbate}} - E_{\text{slab}} - E_{\text{adsorbate}}$$

A more negative value indicates stronger binding.

- Frequency Calculation: Perform vibrational analysis to confirm a true minimum (no imaginary frequencies) and to extract zero-point energy corrections and thermodynamic properties.
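The energy and frequency steps above can be combined as sketched below. The sketch makes three assumptions: the harmonic approximation, wavenumbers in cm⁻¹ with imaginary modes reported as negative numbers (conventions differ between codes), and slab vibrations that adsorption leaves unchanged. The helper names and wavenumbers are illustrative placeholders.

```python
CM1_TO_EV = 1.239841984e-4  # energy of 1 cm^-1 in eV (h * c)


def zero_point_energy(freqs_cm1):
    """Harmonic zero-point energy, sum(h * nu / 2), in eV.

    Imaginary modes are assumed to be reported as negative wavenumbers (conventions vary by code).
    """
    if any(f < 0 for f in freqs_cm1):
        raise ValueError("imaginary mode found: not a true minimum")
    return 0.5 * CM1_TO_EV * sum(freqs_cm1)


def zpe_corrected_e_ads(e_ads, freqs_adsorbed, freqs_gas):
    """E_ads + ZPE(adsorbate on slab) - ZPE(gas molecule); slab modes are assumed unchanged by adsorption."""
    return e_ads + zero_point_energy(freqs_adsorbed) - zero_point_energy(freqs_gas)


# Placeholder wavenumbers (cm^-1) for illustration only.
print(round(zpe_corrected_e_ads(-1.45, [2050.0, 450.0, 380.0, 380.0, 60.0, 60.0], [2140.0]), 3))
```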

Microkinetic Modeling (MKM): From Elementary Steps to Macroscopic Rates

MKM constructs a network of elementary reaction steps (derived from DFT or literature), uses DFT-derived energetics as inputs, and solves a set of coupled differential equations to predict steady-state reaction rates, turnover frequencies (TOF), and surface coverages.

Computational Protocol (Building a Microkinetic Model):

- Define Reaction Network: List all plausible elementary steps (adsorption, dissociation, diffusion, reaction, desorption) for the catalytic cycle.

- Parameterize Rate Constants: For each step i, calculate the rate constant. For a surface reaction step:

$$k_i = \frac{k_B T}{h} \exp\left(-\frac{\Delta G^{\ddagger}_i}{k_B T}\right)$$

For adsorption:

$$k_{\text{ads}} = A\, S_0 \exp\left(-\frac{E_{\text{act}}}{k_B T}\right)$$

where A is the pre-exponential factor and S₀ is the sticking coefficient. ΔG‡ and E_act are obtained from DFT.

- Write Mass Balance Equations: Formulate ODEs for the time evolution of surface intermediate coverages (θ_j) and gas-phase species.

- Solve for Steady State: Numerically integrate the ODEs until

$$\frac{d\theta_j}{dt} = 0 \quad \text{for all } j,$$

or solve the resulting algebraic equations directly.

- Calculate Output Metrics: Compute TOF, product selectivity, and apparent activation energy from the steady-state solution.
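Below is a minimal sketch of the parameterize-integrate-solve sequence for a deliberately tiny, hypothetical mechanism with a single adsorbed intermediate (A(g) + * ⇌ A*, A* → B(g) + *). The barriers and the lumped adsorption rate are placeholders, not the Pt(111) values in Table 1. Production models (e.g., built with CatMAP) handle many coupled intermediates and use dedicated stiff ODE or root-finding solvers.

```python
import math

K_B = 8.617333262e-5  # Boltzmann constant, eV/K
H = 4.135667696e-15   # Planck constant, eV*s


def eyring(dg_act, temperature):
    """Transition-state-theory rate constant (k_B T / h) * exp(-dG_act / k_B T), in s^-1."""
    return (K_B * temperature / H) * math.exp(-dg_act / (K_B * temperature))


def steady_state(k_ads_p, k_des, k_rxn, rtol=1e-12, max_steps=10_000):
    """Integrate d(theta)/dt = k_ads_p * (1 - theta) - (k_des + k_rxn) * theta to steady state.

    Toy mechanism: A(g) + * <-> A* and A* -> B(g) + *. Backward (implicit) Euler is used
    because microkinetic ODEs are usually stiff; returns (coverage of A*, TOF in s^-1).
    """
    k_total = k_ads_p + k_des + k_rxn
    dt = 1.0 / k_total      # step of one characteristic relaxation time
    theta = 0.0             # start from a clean surface
    for _ in range(max_steps):
        theta = (theta + dt * k_ads_p) / (1.0 + dt * k_total)
        dtheta_dt = k_ads_p * (1.0 - theta) - (k_des + k_rxn) * theta
        if abs(dtheta_dt) <= rtol * k_total:
            return theta, k_rxn * theta
    raise RuntimeError("steady state not reached")


T = 500.0
k_ads_p = 1.0e6                 # hypothetical lumped adsorption rate k_ads * P, s^-1
k_des = eyring(1.45, T)         # placeholder desorption free-energy barrier, eV
k_rxn = eyring(0.85, T)         # placeholder surface-reaction free-energy barrier, eV
theta, tof = steady_state(k_ads_p, k_des, k_rxn)
print(f"theta = {theta:.3f}, TOF = {tof:.3g} s^-1")
```

For this linear one-intermediate model the steady state also has a closed form, θ = k_ads·P / (k_ads·P + k_des + k_rxn), which makes a convenient check on the integrator.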

Table 1: Quantitative Data from a Prototypical CO Oxidation Catalysis Workflow (Pt(111) Example)

| Component | Parameter | Value (DFT-PBE) | Value (Experimental Range) | Unit |
|---|---|---|---|---|
| Adsorption Energy | CO (atop) | -1.45 | -1.3 to -1.5 | eV |
| Adsorption Energy | O₂ (dissociative) | -0.98 (per O atom) | -0.9 to -1.1 | eV |
| Activation Barrier | CO + O → CO₂ (Langmuir-Hinshelwood) | 0.85 | 0.7 - 1.0 | eV |
| Microkinetic Output | TOF at 500 K | 2.3 × 10² | 10¹ - 10³ | s⁻¹ |
| Microkinetic Output | Dominant Surface Coverage | θ_CO = 0.65 | θ_CO ~ 0.5-0.7 | ML |

The Generative AI Catalyst

Generative models learn the joint probability distribution P(X, y) over existing catalyst data (compositions, structures, properties) and can propose novel candidates with targeted property values.

Key Model Types & Protocol:

- Variational Autoencoders (VAEs): Learn a compressed, continuous latent space of catalyst representations (e.g., from elemental fractions or graph structures). Novel compositions are generated by sampling from this latent space and decoding.

  - Training Protocol: Train on datasets like CatHub or NOMAD using a reconstruction loss (MSE) and a Kullback-Leibler divergence loss to regularize the latent space.

- Generative Adversarial Networks (GANs): A generator network creates candidate catalysts, while a discriminator network tries to distinguish them from real catalysts in the training data.

  - Training Protocol: Adversarial training until the generator produces candidates the discriminator can no longer reliably identify as fake.

- Graph Neural Networks (GNNs) as Generators: Directly generate crystal graphs or molecular structures atom-by-atom.

- Conditional Generation: Models are conditioned on target properties (e.g., "high TOF for CO oxidation," "low methane selectivity"), guiding the search toward desired regions of chemical space.

Integrated Workflow Architecture

The power lies in the integration of these components into a cohesive, iterative loop.

Diagram 1: Closed-loop catalyst design workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital & Computational Research Tools

| Tool / Solution | Category | Function in Workflow |
|---|---|---|
| VASP, Quantum ESPRESSO | DFT Software | Performs electronic structure calculations to obtain adsorption energies, barriers, and vibrational frequencies. |
| ASE (Atomic Simulation Environment) | Computational Framework | Scripts and automates DFT workflows, handles structure manipulation, and serves as an interface to multiple DFT codes. |
| CatMAP, Kinetix | Microkinetic Modeling | Solves microkinetic models using mean-field approximations, automates sensitivity analysis, and visualizes results. |
| PyTorch, TensorFlow | ML Framework | Provides libraries for building and training generative AI models (VAEs, GANs, GNNs). |
| MatErials Graph Network (MEGNet) | Pre-trained Model | Provides learned representations for materials that can be used as inputs or for transfer learning in generative tasks. |
| CatHub, NOMAD, Materials Project | Database | Curated repositories of DFT-calculated materials properties used for training generative models and benchmarking. |
| FireWorks, AiiDA | Workflow Manager | Orchestrates and manages the execution of complex, multi-step computational workflows across compute resources. |
| pymatgen | Materials Analysis | Python library for generation, analysis, and transformation of crystal structures and computational input files. |

Detailed Workflow Logic & Data Flow

Diagram 2: Iterative model refinement loop

This integrated workflow architecture represents a paradigm shift from empirical, trial-and-error catalyst discovery to a principled, accelerated design cycle. Generative AI proposes novel hypotheses, which are rigorously validated through the coupled first-principles lens of DFT and microkinetic modeling. The resulting data feedback refines the generative model, creating a virtuous cycle. This closed loop directly addresses the core thesis, demonstrating how generative models function not as black-box predictors, but as adaptive discovery engines within a rigorous physical chemistry framework, poised to uncover the next generation of heterogeneous catalysts.

The discovery of novel heterogeneous catalysts is a grand challenge in materials science and chemical engineering. Within a broader thesis on how generative models accelerate this discovery, a fundamental pillar is the effective representation of catalytic materials. Generative models for catalyst design—whether variational autoencoders (VAEs), generative adversarial networks (GANs), or diffusion models—require a meaningful, continuous, and information-rich latent space from which to sample. This latent space is constructed by encoding diverse catalyst representations, including molecular graphs, SMILES strings, and crystallographic data. The fidelity, generalizability, and physical relevance of the generated candidates are directly tied to the quality of these input encodings. This whitepaper provides an in-depth technical guide to state-of-the-art representation learning techniques for catalytic materials, forming the critical data foundation for subsequent generative modeling.

Core Representation Modalities & Encoding Techniques

SMILES (Simplified Molecular Input Line Entry System) Encoding

SMILES strings provide a compact, text-based representation of molecular catalysts or ligands.

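- Challenges: Non-uniqueness (the same molecule can be written as many different SMILES strings, e.g., CCO and OCC for ethanol) and syntactic validity (generated strings may not parse into valid molecules).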

- Modern Encoders:

- Character/Token-based RNNs & LSTMs: Treat SMILES as a sequence; prone to invalid output generation.

- Transformer-based Models (e.g., ChemBERTa, SMILES-BERT): Apply self-attention to tokenized SMILES, capturing long-range dependencies and learning contextualized embeddings. Pre-trained on large corpora (e.g., PubChem).

- Syntax-Aware Encoders: Use parse trees or rule-based tokenization to ensure grammatical integrity.
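Tokenizing a string makes SMILES non-uniqueness easy to see. The sketch below uses a regular-expression tokenizer of the kind common in SMILES language-model work. It is illustrative only: pre-trained models such as ChemBERTa ship their own tokenizers, and those should be used when fine-tuning them.

```python
import re

# Regex tokenizer of the kind widely used for SMILES language models (bracket atoms,
# two-letter halogens, aromatic atoms, bonds, branches, and ring-closure digits).
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; raise if any character is not covered by the regex."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Oc1ccccc1"))
# Ethanol written two valid ways gives two different token sequences:
print(tokenize_smiles("CCO"), tokenize_smiles("OCC"))
```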

Graph-Based Encoding

Catalyst molecules and surface adsorbate complexes are inherently graph-structured (atoms as nodes, bonds as edges).

- Graph Neural Networks (GNNs): The standard for learning over graph structures.

- Message Passing Neural Networks (MPNNs): Aggregate information from neighboring nodes and edges. Update node representations iteratively.

- Graph Attention Networks (GATs): Use attention mechanisms to weigh the importance of neighboring nodes.

- Graph Isomorphism Networks (GINs): Provably as powerful as the Weisfeiler-Lehman graph isomorphism test, suitable for capturing subtle topological differences.

- 3D Graph Convolutions: For geometric and stereochemical information, models like SchNet, DimeNet, and SphereNet incorporate atomic distances, angles, or directional information directly into the message-passing scheme.
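A single message-passing step fits in a few lines of NumPy. This sketch assumes sum aggregation and a ReLU update. Real MPNN, GAT, and GIN layers differ in how they compute, weight, and combine messages, and the identity weights below stand in for learned parameters.

```python
import numpy as np


def message_passing_layer(h, edges, w_self, w_msg):
    """One sum-aggregation message-passing layer.

    h_i' = ReLU(W_self h_i + sum_{j -> i} W_msg h_j)
    h: (n_nodes, d_in) node features; edges: directed (src, dst) pairs;
    w_self, w_msg: (d_out, d_in) weight matrices (learned in practice).
    """
    messages = np.zeros((h.shape[0], w_msg.shape[0]))
    for src, dst in edges:
        messages[dst] += w_msg @ h[src]   # aggregate neighbor messages by summation
    return np.maximum(0.0, h @ w_self.T + messages)


# A three-atom chain 0-1-2, with each bond stored in both directions.
edges = [(0, 1), (1, 0), (1, 2), (2, 1)]
h0 = np.eye(3)                      # toy one-hot "element" features
h1 = message_passing_layer(h0, edges, np.eye(3), np.eye(3))
print(h1)
```

Stacking layers widens each atom's receptive field. After one layer, node 0 carries no information about node 2; after a second layer, it does.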

Crystallographic & Periodic Structure Encoding

Bulk catalysts, oxides, alloys, and metal-organic frameworks (MOFs) require modeling of periodic, infinite crystals.

- Voxel Grids: Discretize the unit cell into a 3D grid of electron density or atomic density. Process with 3D Convolutional Neural Networks (3D-CNNs). Computationally expensive.

- Graph-Based Approaches (Crystal Graphs): Represent the crystal as a multigraph where atoms are nodes, and edges are created between atoms within a cutoff radius (e.g., Crystal Graph Convolutional Neural Network (CGCNN)). Effectively handles periodicity.

- Equivariant Networks: E(3)-equivariant GNNs such as NequIP and MACE respect the Euclidean symmetries of 3D space (translations, rotations, and inversions), leading to more data-efficient and physically consistent representations.

Quantitative Comparison of Encoding Methods

Table 1: Illustrative Performance Comparison of Encoding Methods on Representative Catalyst and Materials Property Prediction Tasks

| Encoding Method | Model Architecture | Target Property (Example) | Mean Absolute Error (MAE) | Key Advantage | Computational Cost |
|---|---|---|---|---|---|
| SMILES (Tokenized) | Transformer (BERT) | Adsorption Energy | ~0.8 - 1.2 eV | Simple, leverages NLP advances | Low-Medium |
| 2D Molecular Graph | MPNN/GIN | Formation Energy | ~0.05 - 0.15 eV/atom | Captures topology & bonds | Medium |
| 3D Molecular Graph | SchNet | HOMO-LUMO Gap | ~0.1 - 0.3 eV | Includes spatial geometry | Medium-High |
| Crystal Graph | CGCNN | Bulk Modulus | ~5 - 15 GPa | Handles periodic materials | Medium |
| Equivariant Graph | MACE/NequIP | Formation Energy | ~0.02 - 0.08 eV/atom | State-of-the-art accuracy | High |

Note: MAE values are illustrative ranges based on recent literature (2023-2024) and are dataset- and task-dependent.

Table 2: Suitability of Encoding Schemes for Different Catalyst Types

| Catalyst Type | Primary Representation | Recommended Encoder | Reason |
|---|---|---|---|
| Organometallic Complex | 3D Molecular Graph | SphereNet, DimeNet | Critical stereochemistry & ligand geometry |
| Supported Metal Nanoparticle | Crystal Graph (Surface Slab) | CGCNN with surface tags | Models periodic slab & adsorption sites |
| Bulk Mixed Metal Oxide | Crystal Graph | ALIGNN (includes angles) | Captures complex ionic bonding networks |
| Zeolite / MOF | Crystal Graph | MOFTransformer (Graph+Attention) | Very large unit cells, long-range pores |
| Molecular Catalyst (Ligand Screen) | SMILES / 2D Graph | ChemBERTa / Attentive FP | Rapid screening of large organic libraries |

Detailed Experimental Protocols for Key Cited Experiments

Protocol 4.1: Training a Crystal Graph Convolutional Network (CGCNN) for Adsorption Energy Prediction

Objective: Train a model to predict the adsorption energy of a CO molecule on a diverse set of metal alloy surfaces.

Materials & Data:

- Dataset: Open Catalyst 2020 (OC20) Dense subset.

- Software: PyTorch, PyTorch Geometric, and `pymatgen` for structure analysis.

Procedure:

- Data Preprocessing:

  - From each `*.traj` file, extract the initial catalyst structure and the final relaxed structure with adsorbate.

  - Using `pymatgen`, create a `Structure` object. Define a neighbor cutoff (e.g., 8.0 Å).

  - Build the crystal graph: Nodes are atoms with features (atomic number, formal charge, etc.). Create edges between all atom pairs within the cutoff. Edge features are Gaussian-expanded distances.

  - The target variable `y` is the adsorption energy: E(adsorbate+slab) - E(slab) - E(adsorbate_gas).

Model Training:

- Architecture: Implement CGCNN as per the original paper. Three convolutional layers with sigmoid activation, followed by a pooling layer and fully-connected readout layers.

- Loss Function: Mean Squared Error (MSE).

- Optimizer: Adam with an initial learning rate of 0.01 and a ReduceLROnPlateau scheduler.

- Training: Split data 80/10/10 (train/val/test). Train for 100 epochs, validating after each epoch. Save the model with the lowest validation loss.

Evaluation:

- Report MAE and RMSE on the held-out test set.

- Generate parity plots (predicted vs. DFT-calculated energies).
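The graph-construction part of the data preprocessing step can be sketched with NumPy alone. This is a simplified illustration that rests on two assumptions: a brute-force neighbor search over the 27 nearest periodic cells, and CGCNN-style Gaussian distance features with 0.2 Å spacing. In practice, pymatgen's `Structure.get_all_neighbors` would typically supply the neighbor list.

```python
import itertools

import numpy as np


def periodic_edges(frac_coords, lattice, cutoff):
    """Return (i, j, distance) for every atom pair within `cutoff` Å, including periodic images.

    frac_coords: (n, 3) fractional coordinates; lattice: (3, 3) array, lattice vectors as rows.
    Only the 27 nearest cells are searched, so `cutoff` should not exceed the cell's
    perpendicular widths for the neighbor list to be complete.
    """
    cart = np.asarray(frac_coords) @ lattice
    edges = []
    for shift in itertools.product((-1, 0, 1), repeat=3):
        offset = np.array(shift) @ lattice
        for i in range(len(cart)):
            for j in range(len(cart)):
                if i == j and shift == (0, 0, 0):
                    continue                  # an atom is not its own neighbor in the home cell
                d = float(np.linalg.norm(cart[j] + offset - cart[i]))
                if d <= cutoff:
                    edges.append((i, j, d))
    return edges


def gaussian_expand(distances, d_min=0.0, d_max=8.0, step=0.2):
    """CGCNN-style edge features: exp(-(d - mu_k)^2 / step^2) on centers mu_k spaced `step` Å apart."""
    n_centers = int(round((d_max - d_min) / step)) + 1
    centers = np.linspace(d_min, d_max, n_centers)
    d = np.asarray(distances, dtype=float)[:, None]
    return np.exp(-((d - centers) ** 2) / step**2)


# Simple cubic toy cell (a = 3 Å, one atom): six face-sharing neighbors at 3 Å.
edges = periodic_edges(np.zeros((1, 3)), 3.0 * np.eye(3), cutoff=3.05)
features = gaussian_expand([d for _, _, d in edges])
print(len(edges), features.shape)
```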

Protocol 4.2: Fine-Tuning a Pre-trained Molecular Transformer (ChemBERTa) for Catalyst Property Prediction

Objective: Adapt a language model pre-trained on SMILES to predict the turnover frequency (TOF) of molecular organocatalysts.

Procedure:

- Data Preparation:

- Curate a dataset of SMILES strings and associated experimental log(TOF) values.

- Tokenize SMILES using the ChemBERTa tokenizer (R-SMILES format recommended).

- Split data into training and evaluation sets.

Model Setup:

- Load the pre-trained `ChemBERTa` model (e.g., from Hugging Face: `deepchem/ChemBERTa-77M-MTR`).

- Add a regression head (a dropout layer followed by a linear layer) on top of the pooled [CLS] token output.

Fine-Tuning:

- Use a low learning rate (e.g., 1e-5) to avoid catastrophic forgetting.

- Employ a weighted MSE loss if data is unevenly distributed.

- Train for a limited number of epochs (e.g., 20) with early stopping.

Interpretation:

- Use attention weight visualization to identify which sub-structural motifs (e.g., functional groups) the model attends to for its predictions.
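One way to implement the weighted MSE loss mentioned above is inverse-frequency weighting over histogram bins of the target. The binning scheme and function names are assumptions made for illustration; they are not part of ChemBERTa or any fine-tuning library. In a PyTorch training loop, the same weights would multiply the per-sample squared errors.

```python
import numpy as np


def inverse_frequency_weights(y, n_bins=10):
    """Per-sample weights ~ 1 / (number of targets in the same histogram bin), rescaled to mean 1."""
    y = np.asarray(y, dtype=float)
    edges = np.histogram_bin_edges(y, bins=n_bins)
    bins = np.digitize(y, edges[1:-1])          # bin index 0 .. n_bins - 1 for every sample
    counts = np.bincount(bins, minlength=n_bins)
    w = 1.0 / counts[bins]
    return w / w.mean()


def weighted_mse(y_pred, y_true, weights):
    """Mean of weights * squared error; rare target ranges contribute more per sample."""
    err = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return float(np.mean(weights * err**2))


log_tof = np.array([0.1, 0.2, 0.15, 0.12, 3.0])   # hypothetical, heavily skewed log(TOF) labels
w = inverse_frequency_weights(log_tof, n_bins=2)
print(w)
```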

Visualizations

Diagram 1: Catalyst Representation Learning Workflow for Generative Models

Diagram 2: Message Passing in a Graph Neural Network (GNN)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Data Resources for Catalyst Representation Learning

| Item Name | Type | Function / Purpose | Key Features (2023-2024) |
|---|---|---|---|
| Open Catalyst Project (OC20/OC22) Datasets | Benchmark Data | Provides massive DFT-relaxed trajectories of adsorbates on surfaces for training and evaluation. | >1.4M relaxations, diverse materials, standard splits. |
| PyTorch Geometric (PyG) | Software Library | Extension of PyTorch for deep learning on graphs and irregular structures. | Efficient GNN layers, easy batching of graphs, extensive model zoo. |
| Deep Graph Library (DGL) | Software Library | Flexible framework for GNNs across multiple backends (PyTorch, TensorFlow). | High performance on large graphs, built-in message-passing primitives. |
| MatDeepLearn | Software Library | Tailored for materials science, includes pre-built crystal graph loaders and models. | Simplified pipeline from pymatgen Structure to trained model. |
| pymatgen & ASE | Python Libraries | Core tools for parsing, analyzing, and manipulating crystal structures (CIF, POSCAR) and molecules. | Universal structure I/O, neighbor analysis, symmetry tools. |
| M3GNet | Pre-trained Model | A universal graph neural network potential for molecules and crystals. | Can be used as a powerful encoder or for direct property prediction. |
| ChemBERTa / MolFormer | Pre-trained Model | Transformer models pre-trained on millions of SMILES/SELFIES strings. | Provides strong starting embeddings for molecular catalysts. |
| Equivariant Libraries (e.g., e3nn, MACE) | Software Library & Models | Frameworks for building E(3)-equivariant neural networks. | Essential for state-of-the-art accuracy on 3D geometric data. |

The broader thesis posits that generative models are transforming heterogeneous catalyst discovery by moving beyond passive property prediction to active, goal-oriented design. This paradigm shift, termed "conditional generation," involves training models to inversely map from a desired reaction outcome (e.g., high Faradaic efficiency for CO2-to-ethylene, low overpotential for NH3 synthesis via N2 reduction) to candidate catalyst structures and compositions. This technical guide delves into the architectures, training protocols, and validation workflows that operationalize this steering for target catalytic reactions.

Foundational Architectures for Conditional Catalyst Generation

Modern approaches leverage several deep generative model families, conditioned on reaction descriptors.

- Conditional Variational Autoencoders (C-VAE): Encode catalyst representations (e.g., elemental fractions, orbital field matrix descriptors) into a latent space, with conditioning on target performance metrics (TOF, overpotential) or reaction identifiers (CO2RR, NRR). Decoding under specific conditions generates novel candidates.

- Conditional Generative Adversarial Networks (C-GAN): A generator creates candidate catalysts (e.g., as composition vectors or graph structures) conditioned on a target reaction profile, while a discriminator tries to distinguish between generated and real high-performing catalysts from a database.

- Transformer-based Autoregressive Models: Generate catalyst materials token-by-token (e.g., element symbols, site positions) based on a prompt that specifies the target reaction and desired performance constraints.

Table 1: Comparison of Core Conditional Generative Architectures for Catalyst Design

| Architecture | Primary Input (Condition) | Generated Output | Key Advantage | Major Challenge for Catalysis |
|---|---|---|---|---|
| Conditional VAE | Target Reaction & Performance Vector | Continuous Representation (e.g., composition vector) | Smooth latent space allows interpolation. | Can generate unrealistic compositions without careful constraints. |
| Conditional GAN | Target Reaction Label or Vector | Catalyst Structure (e.g., crystal graph) | Can produce highly novel, complex structures. | Training instability; mode collapse limiting diversity. |
| Autoregressive Transformer | Text/Token Prompt (e.g., "High FE for C2H4") | Sequence of tokens defining material | Exceptional flexibility for multi-property conditioning. | Requires large, well-curated training datasets. |

Detailed Experimental & Computational Protocols

Protocol: Training a C-VAE for CO2 Reduction Catalyst Discovery

Objective: To generate novel alloy compositions predicted to yield >70% Faradaic Efficiency (FE) for CO2-to-C2+ products.

Methodology:

- Dataset Curation: Assemble a database of experimentally reported bimetallic and trimetallic catalysts with reported FE for C1 and C2+ products. Features include elemental composition (at.%), bulk modulus, d-band center (calculated), and reaction conditions (pH, potential).

- Conditioning Vector: Construct a condition vector y = [Reaction_Target, Min_FE, Max_Overpotential], where the categorical reaction target is typically one-hot encoded. For example, for C2+ generation: y = [C2H4, 0.70, -0.4 V].

- Model Training:

- Encoder q_φ(z | x, y) maps catalyst features x and condition y to latent distribution parameters (μ, σ).

- Latent vector z is sampled: z ~ N(μ, σ²).

- Decoder p_θ(x | z, y) reconstructs x from z and y.

- Loss function, where β controls latent space regularization:

$$\mathcal{L} = \mathcal{L}_{\text{reconstruction}}(x, x') + \beta \, D_{\mathrm{KL}}\left(\mathcal{N}(\mu, \sigma^2) \,\|\, \mathcal{N}(0, 1)\right)$$

- Conditional Generation: For a new condition y', sample a random z from the prior N(0, 1) and decode via p_θ(x | z, y') to generate new catalyst feature vectors.

- Validation: Pass generated compositions to a pre-trained property predictor (e.g., a graph neural network for adsorption energy) for rapid screening. Top candidates undergo DFT validation for key intermediate adsorption energies (e.g., *CO, *CHO, *COCO).
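The conditioning, sampling, and loss steps can be sketched in NumPy. The sketch assumes a hypothetical five-product vocabulary for the one-hot reaction target, a diagonal-Gaussian encoder output parameterized as (μ, log σ²), and an MSE reconstruction term. A real C-VAE would learn its encoder and decoder networks in a framework such as PyTorch.

```python
import numpy as np

# Hypothetical product vocabulary for the categorical part of the condition vector.
REACTION_TARGETS = ["CO", "HCOOH", "CH4", "C2H4", "C2H5OH"]


def condition_vector(target, min_fe, max_overpotential):
    """One-hot reaction target followed by the numeric constraints, e.g. [C2H4, 0.70, -0.4]."""
    one_hot = np.zeros(len(REACTION_TARGETS))
    one_hot[REACTION_TARGETS.index(target)] = 1.0
    return np.concatenate([one_hot, [min_fe, max_overpotential]])


def reparameterize(mu, log_var, rng):
    """z = mu + sigma * eps with eps ~ N(0, I), keeping sampling differentiable in mu and log_var."""
    return mu + np.exp(0.5 * log_var) * rng.standard_normal(mu.shape)


def kl_standard_normal(mu, log_var):
    """Closed-form D_KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dimensions."""
    return -0.5 * np.sum(1.0 + log_var - mu**2 - np.exp(log_var))


def cvae_loss(x, x_recon, mu, log_var, beta=1.0):
    """MSE reconstruction term plus beta-weighted KL term."""
    return np.mean((x - x_recon) ** 2) + beta * kl_standard_normal(mu, log_var)


y = condition_vector("C2H4", 0.70, -0.4)
rng = np.random.default_rng(0)
z = reparameterize(np.zeros(4), np.zeros(4), rng)   # a 4-d latent drawn from the prior
decoder_input = np.concatenate([z, y])              # the decoder p_theta(x | z, y) sees both
print(decoder_input.shape)
```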

Protocol: Active Learning Loop with a Conditional Generator

Objective: Iteratively improve the generator's performance for NH3 synthesis catalysts using high-throughput DFT feedback.

Methodology:

- Initial Generation: A pre-trained conditional generator proposes 100 candidate surfaces (e.g., doped Ru, Fe, or MXenes) conditioned on low onset potential for NRR.

- First-Principles Screening: Candidates undergo automated DFT calculations for critical steps: N₂ adsorption, first protonation (*N₂ + H⁺ + e⁻ → *N₂H), and NH₃ desorption.

- Data Augmentation: The calculated limiting potential (or activity metric) for each candidate is appended to the training database with the condition "Low NRR Overpotential."

- Model Retraining: The conditional generator is retrained on the augmented dataset.

- Iteration: The first four steps are repeated, with each cycle focusing the generator on regions of chemical space that DFT validates as promising. The loop typically converges within 3-5 cycles.
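In the computational hydrogen electrode framework, the limiting potential follows from the largest free-energy step among the proton-electron transfers: U_L = −max(ΔG_i)/e. The sketch assumes that each consecutive pair of intermediates in the list is linked by one (H⁺ + e⁻) transfer. Purely chemical steps, such as N₂ adsorption or NH₃ desorption, must therefore be evaluated separately. The free energies are hypothetical.

```python
def limiting_potential(pathway):
    """Limiting potential (V vs RHE) from a computational-hydrogen-electrode free-energy pathway.

    pathway: [(label, G_eV), ...] for successive intermediates at U = 0 V vs RHE, where each
    consecutive pair is assumed to be linked by one (H+ + e-) transfer step.
    Returns (U_L, potential-determining step): U_L = -max(dG_i) / e.
    """
    steps = [(f"{a} -> {b}", g_b - g_a) for (a, g_a), (b, g_b) in zip(pathway, pathway[1:])]
    pds, dg_max = max(steps, key=lambda step: step[1])
    return -dg_max, pds


# Hypothetical free energies (eV) along part of a distal NRR pathway.
path = [("*N2", 0.00), ("*N2H", 0.85), ("*NNH2", 0.60), ("*N + NH3(g)", 0.20)]
print(limiting_potential(path))   # (-0.85, '*N2 -> *N2H')
```

Candidates with a less negative U_L need a smaller applied potential, and this is the quantity the loop feeds back to the generator.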

Visualization of Core Workflows

Title: AI-Driven Catalyst Discovery Loop

Title: C-VAE Training & Generation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational & Experimental Tools for AI-Steered Catalyst Research

| Category | Item/Software | Function in Conditional Generation Workflow |
|---|---|---|
| Data Curation | Materials Project API, CatHub Database | Provides foundational datasets of crystal structures and experimental catalytic properties for model training. |
| Featurization | DScribe, matminer | Computes material descriptors (e.g., SOAP, Coulomb matrix) from atomic structures for model input. |
| Generative Modeling | PyTorch, TensorFlow with RDKit, MatGL | Frameworks for building and training C-VAEs, C-GANs, and transformer models for molecules and materials. |
| Property Prediction | Graph Neural Networks (MEGNet, ALIGNN), Quantum Espresso, VASP | Fast screening (GNNs) and accurate validation (DFT) of generated catalyst candidates' properties. |
| Active Learning | AmpTorch, COMOCAT | Platforms to automate the iterative loop of generation, DFT calculation, and model retraining. |
| Experimental Validation | High-throughput electrochemical synthesis rig, Online GC/MS, Isotope-Labeled Reactants (¹⁵N₂, ¹³CO₂) | For synthesizing, testing, and unambiguously confirming the activity of AI-predicted catalysts for target reactions. |
| Workflow Management | FireWorks, AiiDA | Orchestrates complex, multi-step computational workflows linking generation, DFT, and analysis. |

The discovery of high-performance heterogeneous catalysts is a multidimensional optimization problem across composition, structure, and operating conditions. Generative models offer a paradigm shift by proposing novel, synthetically accessible materials beyond human intuition. This whitepaper details the technical implementation of active learning loops that close the cycle between generative AI, robotic experimentation, and model retraining, specifically for accelerating heterogeneous catalyst discovery.

Foundational Generative Models in Materials Science

Generative models for catalyst discovery learn the joint probability distribution of atomic configurations and their target properties (e.g., adsorption energy, activation barrier) from existing data. They then sample from this distribution to propose candidates with optimized properties.

Table 1: Key Generative Model Architectures for Catalyst Discovery

| Model Type | Core Mechanism | Catalyst Discovery Application | Key Advantage |
|---|---|---|---|
| Variational Autoencoder (VAE) | Encoder maps a material to a latent space; decoder reconstructs it or generates new samples. | Generating novel bulk crystal structures and surfaces. | Smooth, interpolatable latent space. |
| Generative Adversarial Network (GAN) | Generator creates candidates; discriminator tries to distinguish them from real materials. | Designing nanoparticle alloy compositions. | Can produce highly novel structures. |
| Flow-based Models | Learns an invertible transformation between the data and a simple base distribution. | Generating 3D atomic coordinates for molecular catalysts. | Exact likelihood (density) evaluation. |
| Diffusion Models | Iteratively denoises random noise into a structure. | High-fidelity generation of complex porous catalysts (e.g., MOFs). | State-of-the-art generation quality. |
| Graph Neural Network (GNN)-based | Operates directly on atomistic graphs; uses autoregressive or one-shot decoding. | Generating doped or defected catalyst surfaces. | Respects permutation and translational invariance and, with periodic edges, crystal periodicity. |
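The VAE row's "smooth, interpolatable latent space" rests on two ingredients: the reparameterization trick, which makes sampling differentiable, and a KL penalty that pulls each encoded distribution toward a standard normal. The NumPy sketch below shows both, plus linear interpolation between two latent codes. The encoder that produces `mu`/`log_var` and the decoder that would turn a latent vector back into a catalyst structure are assumed and not shown; the function names are illustrative, not from any particular library.

```python
import numpy as np


def reparameterize(mu: np.ndarray, log_var: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Sample z ~ N(mu, diag(exp(log_var))) as mu + sigma * eps, so gradients can flow through mu and log_var."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps


def kl_to_standard_normal(mu: np.ndarray, log_var: np.ndarray) -> float:
    """Closed-form KL(N(mu, diag(exp(log_var))) || N(0, I)), the VAE's latent regularizer."""
    return float(0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var))


def interpolate_latents(z_a: np.ndarray, z_b: np.ndarray, n_points: int) -> np.ndarray:
    """Evenly spaced points (rows) on the straight line between two latent codes."""
    ts = np.linspace(0.0, 1.0, n_points)[:, None]
    return (1.0 - ts) * z_a[None, :] + ts * z_b[None, :]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical encoder outputs for two known surfaces in an 8-dimensional latent space.
    mu_a, mu_b = np.zeros(8), np.ones(8)
    z = reparameterize(mu_a, np.full(8, -2.0), rng)
    path = interpolate_latents(mu_a, mu_b, n_points=5)  # each row would be passed to the (hypothetical) decoder
    print(z.shape, path.shape, kl_to_standard_normal(mu_b, np.zeros(8)))
```

Because the KL term keeps encoded points close to a common Gaussian, intermediate points along `path` tend to decode to plausible structures rather than falling into empty regions of latent space.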

Core Active Learning Loop Architecture

The active learning loop is an iterative process that couples computational design with physical validation.

Diagram 1: High-level active learning loop for catalyst discovery.
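The loop can be sketched in a few lines. Everything here is a toy stand-in under stated assumptions: each candidate is a single scalar descriptor (e.g., a dopant fraction), the "generative model" is a random sampler, the "experiment" is a known analytic function, and the surrogate is a kernel-weighted average with a distance-based uncertainty rather than a trained property-prediction model. Candidates are ranked with a UCB-style score.

```python
import math
import random
from typing import Callable, List, Tuple

Observation = Tuple[float, float]  # (descriptor x, measured KPI y)


def toy_surrogate(x: float, data: List[Observation], length: float = 0.1) -> Tuple[float, float]:
    """Hypothetical surrogate: kernel-weighted mean of observed KPIs, with an uncertainty
    that is 0 at a measured point and approaches 1 far from all data. Requires non-empty data."""
    weights = [math.exp(-(((x - xi) / length) ** 2)) for xi, _ in data]
    total = sum(weights)
    mean = sum(w * yi for w, (_, yi) in zip(weights, data)) / total if total > 1e-12 else 0.0
    nearest = min(abs(x - xi) for xi, _ in data)
    std = 1.0 - math.exp(-((nearest / length) ** 2))
    return mean, std


def active_learning_loop(
    generate: Callable[[], List[float]],
    run_experiment: Callable[[float], float],
    seed_data: List[Observation],
    n_rounds: int = 5,
    batch_size: int = 4,
    beta: float = 2.0,
) -> List[Observation]:
    data = list(seed_data)
    for _ in range(n_rounds):
        # 1. Generate: the generative model proposes a pool of candidates.
        candidates = generate()
        # 2. Predict and acquire: rank by mean + beta * std under the current surrogate.
        scored = []
        for x in candidates:
            mean, std = toy_surrogate(x, data)
            scored.append((mean + beta * std, x))
        scored.sort(reverse=True)
        # 3. Experiment: "measure" the top batch (robotic platform or DFT in practice).
        for _, x in scored[:batch_size]:
            data.append((x, run_experiment(x)))
        # 4. Retrain: this surrogate is non-parametric, so appending data is the retraining step.
    return data


if __name__ == "__main__":
    rng = random.Random(0)
    propose = lambda: [rng.random() for _ in range(50)]  # stand-in for a generative model
    measure = lambda x: -((x - 0.3) ** 2)  # hypothetical KPI peaking at x = 0.3
    history = active_learning_loop(propose, measure, seed_data=[(0.1, measure(0.1)), (0.9, measure(0.9))])
    best_x, best_y = max(history, key=lambda obs: obs[1])
    print(f"{len(history)} observations, best x = {best_x:.3f}")
```

In a real platform, step 4 would be one of the retraining strategies compared in Table 3 below.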

Acquisition Function: The Selection Engine

The acquisition function balances exploration (sampling uncertain regions of the design space) and exploitation (sampling regions predicted to perform well). Common functions include:

- Expected Improvement (EI): Selects the candidate with the largest expected gain over the best value observed so far.

- Upper Confidence Bound (UCB): Selects the candidate with the highest predicted mean plus β times its predicted standard deviation; a larger β favors exploration.

- Thompson Sampling: Draws a random sample from the posterior model distribution and selects its optimum.
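The three acquisition rules can be written for a surrogate that returns a Gaussian predictive mean μ and standard deviation σ per candidate (an assumption; a Gaussian process or a model ensemble would provide these). For maximization, EI has the closed form EI = (μ − f* − ξ)Φ(z) + σφ(z) with z = (μ − f* − ξ)/σ, where f* is the best observed value and ξ ≥ 0 is an optional exploration margin. The Thompson-sampling function below draws independently for each candidate, which is a simplification: exact Thompson sampling draws one joint sample from the posterior and so preserves correlations between candidates.

```python
import math
import random
from typing import Sequence


def _normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.0) -> float:
    """EI for maximization: E[max(f - best - xi, 0)] with f ~ N(mu, sigma^2)."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    return improvement * _normal_cdf(z) + sigma * _normal_pdf(z)


def upper_confidence_bound(mu: float, sigma: float, beta: float = 2.0) -> float:
    """UCB score: predicted mean plus beta standard deviations."""
    return mu + beta * sigma


def thompson_pick(mus: Sequence[float], sigmas: Sequence[float], rng: random.Random) -> int:
    """Approximate Thompson sampling: one independent draw per candidate, return the argmax index."""
    draws = [rng.gauss(m, s) for m, s in zip(mus, sigmas)]
    return max(range(len(draws)), key=draws.__getitem__)


if __name__ == "__main__":
    # Hypothetical surrogate output (e.g., predicted yields) for three candidates.
    mus, sigmas, best = [0.40, 0.35, 0.20], [0.01, 0.05, 0.30], 0.38
    ei = [expected_improvement(m, s, best) for m, s in zip(mus, sigmas)]
    ucb = [upper_confidence_bound(m, s) for m, s in zip(mus, sigmas)]
    print("EI:", [round(v, 4) for v in ei], "UCB:", ucb, "TS pick:", thompson_pick(mus, sigmas, random.Random(0)))
```

With these numbers both EI and UCB rank the third candidate first despite its low mean, because its large σ leaves room for a substantial improvement: this is the exploration behavior described above.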

Robotic Experimentation Platform Integration

Automated platforms execute the synthesis, characterization, and testing of candidate catalysts.

Table 2: Key Modules in a Catalysis Robotic Platform

| Module | Function | Example Techniques/Devices | Throughput (Estimated) |
|---|---|---|---|
| Automated Synthesis | Prepares catalyst libraries. | Liquid handling robots, inkjet printing, CVD/PVD automation, sol-gel stations. | 50-200 unique compositions per day. |
| In-Line Characterization | Provides immediate structural/chemical data. | Raman spectroscopy, XRD autosamplers, MS for effluent analysis. | Parallel measurement of 4-16 samples. |
| High-Throughput Testing | Measures catalytic performance. | Multi-channel plug-flow reactors, parallel pressure reactors, photochemical plates. | 16-96 simultaneous reaction channels. |
| Automated Analytics | Processes raw data into model-ready features. | GC/MS/TCD autosamplers, machine vision for product analysis, Python data pipelines. | Minutes per sample batch. |

Detailed Experimental Protocol: Automated Screening of Oxidation Catalysts

Objective: Evaluate a library of doped metal oxide catalysts, proposed by a generative model, for propane oxidative dehydrogenation (ODH).

Synthesis via Robotic Dispensing:

- Precursor Solutions: 0.1 M aqueous solutions of host metal precursors (e.g., V and Mo salts) and dopant precursors (e.g., Nb, Sb, and Te salts).

- Procedure: Using a liquid handling robot (e.g., Hamilton MICROLAB STAR), dispense calculated volumes into the wells of a 48-well quartz reactor plate to achieve the target compositions (e.g., V₀.₉Mo₀.₀₅Te₀.₀₅Oₓ).

- Drying/Calcination: The plate is transferred via robotic arm to a drying oven (110°C, 2 h) and then to a programmable muffle furnace (air, 500°C, 4 h).
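The "calculated volumes" follow from n = cV for each cation. This minimal sketch assumes 0.1 M stocks for every element and a hypothetical total metal loading of 10 µmol per well, since the protocol does not state the loading. It also checks each volume against the 1 µL lower limit of the liquid-handling tips listed in Table 4. Under these assumptions, V₀.₉Mo₀.₀₅Te₀.₀₅Oₓ needs 90 µL of the V stock and 5 µL each of the Mo and Te stocks.

```python
from typing import Dict


def dispense_volumes_ul(
    target_fractions: Dict[str, float],
    stock_molarity: Dict[str, float],
    total_metal_umol: float,
    min_volume_ul: float = 1.0,
) -> Dict[str, float]:
    """Volume of each precursor stock (µL) giving the target cation fractions in one well.

    Uses n = c * V with 1 µmol / (1 mol/L) = 1 µL. Oxygen (the "Ox" part) is not dispensed.
    """
    if abs(sum(target_fractions.values()) - 1.0) > 1e-6:
        raise ValueError("cation fractions must sum to 1")
    volumes = {}
    for element, fraction in target_fractions.items():
        volume = fraction * total_metal_umol / stock_molarity[element]
        if 0.0 < volume < min_volume_ul:
            raise ValueError(f"{element}: {volume:.2f} µL is below the {min_volume_ul} µL tip minimum")
        volumes[element] = volume
    return volumes


if __name__ == "__main__":
    # V0.9Mo0.05Te0.05Ox from 0.1 M stocks, assuming 10 µmol total metal per well (hypothetical loading).
    vols = dispense_volumes_ul({"V": 0.9, "Mo": 0.05, "Te": 0.05}, {"V": 0.1, "Mo": 0.1, "Te": 0.1}, 10.0)
    print(vols)
```

Raising an error for sub-minimum volumes, rather than silently rounding, flags compositions that need a more dilute stock before the robot run starts.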

In-Line Characterization:

- The plate is moved to an automated Raman microscope. Spectra are collected at 3 points per well (532 nm laser).

- A principal component analysis (PCA) model, fitted to reference spectra of known phases, converts each spectrum into a "phase purity" score.
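The protocol does not say how the PCA model turns a spectrum into a purity score. One plausible construction, offered here as an assumption: fit PCA to reference spectra of the target phase, project each new spectrum onto the retained components, and score it by how much of the spectrum the projection explains. Bands from an unexpected phase fall outside the reference subspace and lower the score. The spectra below are synthetic Gaussian bands, not real Raman data.

```python
import numpy as np


def fit_reference_pca(reference_spectra: np.ndarray, n_components: int):
    """PCA via SVD of mean-centered reference spectra (rows = spectra, columns = Raman-shift points)."""
    mean = reference_spectra.mean(axis=0)
    _, _, vt = np.linalg.svd(reference_spectra - mean, full_matrices=False)
    return mean, vt[:n_components]  # rows of vt are orthonormal principal axes


def phase_purity_score(spectrum: np.ndarray, mean: np.ndarray, components: np.ndarray) -> float:
    """1 - |residual| / |centered spectrum|; equals 1.0 when the spectrum lies in the reference subspace."""
    centered = spectrum - mean
    reconstruction = components.T @ (components @ centered)
    norm = np.linalg.norm(centered)
    if norm == 0.0:
        return 1.0
    return float(1.0 - np.linalg.norm(centered - reconstruction) / norm)


if __name__ == "__main__":
    shift = np.linspace(0.0, 1.0, 200)  # normalized Raman-shift axis (illustrative)
    peak = lambda c: np.exp(-(((shift - c) / 0.02) ** 2))
    # Synthetic references: two bands of one phase with varying relative intensity.
    refs = np.array([a * peak(0.3) + b * peak(0.5) for a, b in [(1.0, 0.2), (0.8, 0.5), (0.6, 0.9), (1.2, 0.3)]])
    mean, comps = fit_reference_pca(refs, n_components=2)
    pure = 0.7 * peak(0.3) + 0.4 * peak(0.5)
    impure = pure + 0.5 * peak(0.8)  # extra band from a hypothetical secondary phase
    print(phase_purity_score(pure, mean, comps), phase_purity_score(impure, mean, comps))
```

With three spectra per well, the per-point scores could simply be averaged into one value per catalyst.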

Catalytic Testing:

- The plate is sealed into a parallel plug-flow reactor system (e.g., Symyx/Highthroughput Explorer).

- Conditions: 550°C; feed C₃H₈/O₂/N₂ = 4/8/88; total flow 20 sccm per channel; atmospheric pressure.

- Analysis: Effluent from each channel is sequentially sampled by a multiposition valve and analyzed by a single GC-TCD/FID every 20 minutes.
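Because one GC is shared across all channels through a multiposition valve, each channel is revisited only once every N × (GC cycle time). This minimal round-robin sketch assumes the valve steps through the channels in a fixed order and that each injection occupies the full 20-minute cycle. If each of the 48 wells on the plate is its own channel, that is 48 × 20 = 960 min (16 h) between successive analyses of the same catalyst; a 16-channel reactor gives 320 min.

```python
from typing import List, Tuple


def revisit_interval_min(n_channels: int, gc_cycle_min: float) -> float:
    """Time between two analyses of the same channel when one GC is shared round-robin."""
    return n_channels * gc_cycle_min


def round_robin_schedule(n_channels: int, gc_cycle_min: float, duration_min: float) -> List[Tuple[float, int]]:
    """(injection time in min, channel index) pairs for a multiposition valve cycling through channels."""
    n_injections = int(duration_min // gc_cycle_min)
    return [(k * gc_cycle_min, k % n_channels) for k in range(n_injections)]


if __name__ == "__main__":
    print(revisit_interval_min(48, 20.0))  # one channel per well of a 48-well plate
    print(round_robin_schedule(4, 20.0, 100.0))
```

The revisit interval grows linearly with channel count, which sets how finely activity changes such as deactivation can be resolved for any single catalyst.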

Data Pipeline:

- GC peaks are auto-integrated, and propane conversion (X) and propylene selectivity (S) are calculated.

- Key performance indicator (KPI): the yield, Y = X × S, is appended to each candidate's descriptor vector (composition, phase score).
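These KPIs can be computed on a carbon basis once GC peak areas have been converted to molar flows with the calibration standards (that conversion is assumed to happen upstream). Here selectivity is taken as carbon-based over detected products, an assumption; it agrees with a basis of propane consumed only when the carbon balance closes. The product list, flows, and descriptor record in the example are hypothetical.

```python
from typing import Dict, Tuple

# Carbon atoms per molecule for the ODH products considered (extend as needed).
CARBON_NUMBER = {"C3H6": 3, "C2H4": 2, "CH4": 1, "CO": 1, "CO2": 1}


def odh_kpis(f_propane_in: float, f_propane_out: float, product_flows: Dict[str, float]) -> Tuple[float, float, float]:
    """Return (X, S, Y) for propane ODH from molar flows in any consistent unit.

    X = (F_in - F_out) / F_in
    S = 3 * F_C3H6 / sum_i(nC_i * F_i)   (carbon-based selectivity to propylene)
    Y = X * S
    """
    conversion = (f_propane_in - f_propane_out) / f_propane_in
    carbon_in_products = sum(CARBON_NUMBER[p] * f for p, f in product_flows.items())
    if carbon_in_products <= 0.0:
        return conversion, 0.0, 0.0
    selectivity = 3.0 * product_flows.get("C3H6", 0.0) / carbon_in_products
    return conversion, selectivity, conversion * selectivity


if __name__ == "__main__":
    x, s, y = odh_kpis(4.0, 3.6, {"C3H6": 0.3, "CO": 0.15, "CO2": 0.15})
    # Append the KPI to a hypothetical descriptor record (composition, phase score) for retraining.
    record = {"V": 0.9, "Mo": 0.05, "Te": 0.05, "phase_score": 0.92, "yield": y}
    print(f"X = {x:.3f}, S = {s:.3f}, Y = {y:.3f}", record)
```

In this example the carbon balance closes (0.4 units of propane converted, 1.2 units of carbon in products), so X = 0.10, S = 0.75, and Y = 0.075.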

Model Retraining & Uncertainty Quantification

New experimental data triggers iterative model updates.

Diagram 2: Model retraining and uncertainty quantification pipeline.

Table 3: Retraining Strategies & Impact

| Strategy | Protocol | Computational Cost | Impact on Model |
|---|---|---|---|
| Full Retraining | Train from scratch on the entire growing dataset. | High (GPU days) | Most accurate; captures all data trends. |
| Transfer Learning | Start from previous weights and fine-tune on new data. | Medium (GPU hours) | Efficient, but risks catastrophic forgetting. |
| Online/Bayesian Updates | Update model parameters sequentially via Bayes' rule. | Low | Enables real-time adaptation; suited to streaming data. |
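The "Online/Bayesian Updates" row is easiest to see for a Bayesian linear model with a Gaussian prior and known noise variance. There, Bayes' rule has a closed form, and each new measurement updates the posterior without revisiting old data. This minimal sketch assumes the descriptor vector (composition, phase score) enters linearly and uses hypothetical prior and noise variances. More complex surrogates, such as neural networks or GNNs, need approximate versions of this update.

```python
import numpy as np


class OnlineBayesianLinearModel:
    """Conjugate Gaussian linear regression, y = w . x + noise, updated one observation at a time."""

    def __init__(self, n_features: int, prior_var: float = 10.0, noise_var: float = 0.01):
        self.precision = np.eye(n_features) / prior_var  # posterior precision; starts at the zero-mean prior
        self.shift = np.zeros(n_features)  # precision @ posterior mean
        self.noise_var = noise_var

    def update(self, x, y: float) -> None:
        """Bayes' rule for one new (x, y): precision += x x^T / s2, shift += x y / s2."""
        x = np.asarray(x, dtype=float)
        self.precision += np.outer(x, x) / self.noise_var
        self.shift += x * y / self.noise_var

    @property
    def mean(self) -> np.ndarray:
        return np.linalg.solve(self.precision, self.shift)

    def predict(self, x):
        """Predictive mean and standard deviation (including observation noise) at descriptor x."""
        x = np.asarray(x, dtype=float)
        var = x @ np.linalg.solve(self.precision, x) + self.noise_var
        return float(x @ self.mean), float(np.sqrt(var))


if __name__ == "__main__":
    model = OnlineBayesianLinearModel(n_features=3)
    # Hypothetical stream of (descriptor, yield) results arriving from the robotic platform.
    for x, y in [([0.9, 0.05, 0.8], 0.07), ([0.8, 0.10, 0.6], 0.05), ([0.7, 0.15, 0.9], 0.09)]:
        model.update(x, y)
    print(model.predict([0.85, 0.08, 0.7]))
```

Processing observations one at a time gives exactly the same posterior as a batch fit on all data, and the predictive standard deviation can feed the acquisition functions described earlier.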

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Reagent Solutions for Robotic Catalyst Discovery

| Item/Category | Function | Example Specification/Note |
|---|---|---|
| High-Throughput Reactor Plates | Platform for parallel synthesis and testing. | 48-well quartz or stainless steel plate; each well acts as a micro-reactor. |
| Metal Precursor Libraries | Source of catalytic elements. | 0.1-0.5 M nitrate or chloride solutions in dilute nitric acid or water, >99.99% purity. |
| Automated Liquid Handling Tips | Precise transfer of precursor solutions. | Disposable conductive tips, volume range 1 µL to 1 mL. |
| Solid Catalyst Supports | High-surface-area carriers. | γ-Al₂O₃, SiO₂, TiO₂ powders (100-200 mesh) in automated powder dispensers. |
| Calibration Gas Mixtures | For reactor feed and instrument calibration. | Certified mixtures of C₃H₈, O₂, N₂, C₃H₆, CO₂, and CO in balance gas. |
| GC Calibration Standards | Quantify reaction products. | Known concentrations of all expected products (alkenes, COₓ, H₂O) in inert solvent. |
| Robotic Arm Grippers | Handle plates between stations. | Custom, heat-resistant grippers for moving reactor plates. |
| Data Pipeline Software | Unify experimental data. | Python scripts with libraries (scikit-learn, PyTorch, RDKit, pymatgen) for automated featurization. |

The discovery of heterogeneous catalysts is a complex, high-dimensional challenge. Generative models, a class of machine learning methods, address it by learning the underlying probability distribution of known materials and proposing new candidates predicted to be stable and high-performing. This guide explores their application to two promising classes: Single-Atom Alloys (SAAs) and Metal-Organic Frameworks (MOFs). The core thesis is that generative models, through controlled exploration of chemical space, can significantly accelerate the discovery of catalysts with targeted properties such as activity, selectivity, and stability.

Generative Model Architectures for Materials Discovery

Key generative architectures applied in this domain include:

- Variational Autoencoders (VAEs): Encode materials into a continuous latent space where interpolation and perturbation generate new, plausible structures.

- Generative Adversarial Networks (GANs): A generator creates candidate materials while a discriminator evaluates their authenticity, driving the generator toward producing realistic structures.

- Diffusion Models: Iteratively denoise random initial structures to draw samples from the learned data distribution; they are noted for high sample fidelity.

- Autoregressive Models: Generate materials atom-by-atom or fragment-by-fragment based on learned conditional probabilities.

- Conditional Generators: All the above models can be conditioned on target properties (e.g., a target CO₂ adsorption energy), enabling goal-directed discovery.
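To make "iteratively denoise random initial structures" concrete: a diffusion model is trained by corrupting known structures with noise of increasing scale and teaching a network to undo the corruption. For atomic positions in a periodic cell, one common choice is to add Gaussian noise to fractional coordinates and wrap the result back into the unit cell, so the corrupted structure remains periodic. The sketch covers only this forward (noising) process and the minimum-image displacement a denoiser could be trained to recover. The noise-schedule values are illustrative assumptions, and the denoising network itself is not shown.

```python
import numpy as np


def geometric_sigmas(n_steps: int, sigma_min: float = 0.005, sigma_max: float = 0.5) -> np.ndarray:
    """Noise scales growing geometrically from sigma_min to sigma_max (fractional units); n_steps >= 2."""
    return sigma_min * (sigma_max / sigma_min) ** (np.arange(n_steps) / (n_steps - 1))


def noise_fractional_coords(frac: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Add isotropic Gaussian noise and wrap into the unit cell (a wrapped-normal corruption)."""
    return np.mod(frac + sigma * rng.standard_normal(frac.shape), 1.0)


def minimum_image_displacement(frac_from: np.ndarray, frac_to: np.ndarray) -> np.ndarray:
    """Shortest periodic displacement from one set of fractional coordinates to another."""
    d = frac_to - frac_from
    return d - np.round(d)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    clean = np.array([[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]])  # e.g., a two-atom cell (illustrative)
    sigmas = geometric_sigmas(50)
    noisy = noise_fractional_coords(clean, sigmas[10], rng)
    # A denoiser would be trained to predict this displacement (or the corresponding score) from `noisy`.
    target = minimum_image_displacement(noisy, clean)
    print(noisy.round(3), target.round(3))
```

The minimum-image convention matters because an atom pushed across a cell boundary (e.g., from 0.99 to 0.01) has moved 0.02 in fractional units, not 0.98.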

Case Study 1: Single-Atom Alloys (SAAs)

SAAs consist of isolated reactive metal atoms embedded in the surface of a more inert host metal, a configuration that gives them distinctive catalytic properties.

3.1 Generative Design Workflow for SAAs

Diagram Title: Generative Design Workflow for Single-Atom Alloys
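Candidates may come from a generative model or, as in this simplified sketch, from exhaustive enumeration of dopant/host pairs. Either way, a downstream filter-and-rank stage can be sketched as follows: discard pairs that a stability check flags (for example, dopants predicted to cluster or to sink into the subsurface), then rank the rest by how close a predicted descriptor lies to a target value, in the spirit of the "optimal binding energy" criteria in the SAA results table below. The predictor, stability check, target value, and all numbers in the example are hypothetical placeholders; in practice they would come from DFT or a trained surrogate.

```python
from itertools import product
from typing import Callable, Iterable, List, Tuple


def rank_saa_candidates(
    hosts: Iterable[str],
    dopants: Iterable[str],
    predict_descriptor_ev: Callable[[str, str], float],
    is_stable: Callable[[str, str], bool],
    target_ev: float,
    top_k: int = 5,
) -> List[Tuple[str, float]]:
    """Return (label, |predicted - target|) for the top_k stable dopant/host pairs, closest first."""
    ranked = []
    for host, dopant in product(hosts, dopants):
        if host == dopant or not is_stable(host, dopant):
            continue
        gap = abs(predict_descriptor_ev(host, dopant) - target_ev)
        ranked.append((f"{dopant}₁/{host}(111)", gap))
    ranked.sort(key=lambda item: item[1])
    return ranked[:top_k]


if __name__ == "__main__":
    # Placeholder descriptor values in eV; NOT real DFT results.
    fake_dft = {("Cu", "Pt"): -0.50, ("Cu", "Rh"): -0.80, ("Ag", "Pt"): -0.30, ("Ag", "Rh"): -0.60}
    unstable = {("Ag", "Pt")}  # hypothetical stability flag
    shortlist = rank_saa_candidates(
        ["Cu", "Ag"], ["Pt", "Rh"],
        predict_descriptor_ev=lambda h, d: fake_dft[(h, d)],
        is_stable=lambda h, d: (h, d) not in unstable,
        target_ev=-0.52,
        top_k=2,
    )
    print(shortlist)
```

Passing the predictor and stability check in as functions keeps the ranking logic independent of whether the numbers come from a fast GNN screen or from DFT validation.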

3.2 Key Research Reagents & Materials for SAA Synthesis & Testing

| Category | Item | Function/Explanation |
|---|---|---|
| Precursor Materials | Host Metal Foils/Powders (Cu, Ag, Au, Pd) | Provide the inert substrate for dopant anchoring. |
| | Dopant Metal Salts (e.g., M(NO₃)ₓ, MClₓ; M = Pt, Rh, Co) | Source of single metal atoms for deposition. |
| Synthesis | Ultra-High Vacuum (UHV) Chamber | Environment for clean surface preparation and controlled deposition (e.g., PVD). |
| | Physical Vapor Deposition (PVD) Source | For precise, sub-monolayer deposition of dopant atoms. |
| | Wetness Impregnation Solutions | Liquid-phase method using solvents to deposit precursors on supports. |
| Characterization | Scanning Tunneling Microscopy (STM) | Direct imaging of single atoms on surfaces. |
| | X-ray Absorption Spectroscopy (XAS) | Probes local electronic structure and coordination of single atoms. |
| | Mass Spectrometer (in testing rig) | Quantifies reaction products for activity/selectivity measurement. |

3.3 Quantitative Data: Promising Generatively Designed SAAs

Table 1: Generated & Validated SAA Catalysts for Key Reactions.

| Generated SAA Candidate | Target Reaction | Predicted Property (DFT) | Experimentally Validated Performance | Key Reference (Example) |
|---|---|---|---|---|
| Pt₁/Cu(111) | Selective Hydrogenation | Low C=C activation barrier | >95% selectivity to alkene | J. Am. Chem. Soc. 2022, 144, ... |
| Rh₁/Ag(111) | CO₂ Hydrogenation to Methanol | Optimal *OCOH binding energy | Methanol STY: 0.5 mol/gₐₜₘ/h | Nat. Catal. 2023, 6, ... |
| Co₁/Pd(111) | Nitrate Electroreduction to Ammonia | Suppressed H₂ evolution side reaction | NH₃ Faradaic Efficiency: 85% | Sci. Adv. 2023, 9, ... |
| Ni₁/Au(111) | Non-oxidative Methane Coupling | Low C-H activation energy | Ethane yield 10× that of pure Ni | ACS Catal. 2024, 14, ... |

3.4 Experimental Protocol: Synthesis & Testing of a Pt₁/Cu SAA

Objective: Synthesize a Pt₁/Cu single-atom alloy and validate it for propylene hydrogenation.

- Substrate Preparation: A Cu(111) single crystal is cleaned in UHV via repeated cycles of Ar⁺ sputtering (1 keV, 15 min) and annealing at 750°C.

- SAA Synthesis: A sub-monolayer amount of Pt is deposited onto the clean, room-temperature Cu surface using an electron-beam evaporator. The sample is subsequently annealed at 300°C to facilitate surface diffusion and alloy formation.

- Characterization (in-situ): STM confirms isolated Pt atoms. XAS at the Pt L₃-edge confirms the absence of Pt-Pt bonds and a coordination environment consistent with Pt in Cu.

- Catalytic Testing: The sample is transferred under UHV to a high-pressure reaction cell. A gas mixture of C₃H₆ (10 mbar), H₂ (100 mbar), and He (950 mbar) is introduced at 150°C, and reaction products are monitored by online mass spectrometry.
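Activity from this experiment is usually normalized per active site as a turnover frequency (TOF). This minimal sketch rests on three assumptions: every deposited Pt atom is an isolated active site, Pt coverage is expressed in monolayers (ML) relative to Cu(111) surface atoms, and the mass-spectrometer signal has already been calibrated to a product formation rate. The rate, coverage, and crystal area in the example are hypothetical. The surface-atom density of an fcc(111) face is 4/(√3 a²), about 1.77 × 10¹⁵ cm⁻² for Cu with a = 3.615 Å.

```python
import math


def fcc111_atom_density_cm2(lattice_constant_angstrom: float) -> float:
    """Surface atoms per cm^2 on an fcc(111) face: 4 / (sqrt(3) * a^2)."""
    a_cm = lattice_constant_angstrom * 1e-8
    return 4.0 / (math.sqrt(3.0) * a_cm**2)


def turnover_frequency(
    rate_molecules_per_s: float,
    dopant_coverage_ml: float,
    area_cm2: float,
    lattice_constant_angstrom: float = 3.615,  # Cu
) -> float:
    """TOF (1/s) = product formation rate / number of dopant sites, assuming every dopant atom is active."""
    n_sites = dopant_coverage_ml * fcc111_atom_density_cm2(lattice_constant_angstrom) * area_cm2
    return rate_molecules_per_s / n_sites


if __name__ == "__main__":
    # Hypothetical: 0.01 ML Pt on a 0.8 cm^2 Cu(111) crystal producing 1e12 propane molecules per second.
    density = fcc111_atom_density_cm2(3.615)
    print(f"{density:.3e} atoms/cm^2, TOF = {turnover_frequency(1e12, 0.01, 0.8):.3g} 1/s")
```

If some Pt atoms sit in the subsurface or form clusters, the true number of active sites is smaller and this estimate is a lower bound on the per-site TOF.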

Case Study 2: Metal-Organic Frameworks (MOFs)

MOFs are porous, crystalline materials with ultra-high surface areas, tunable via linker and metal node choice.

4.1 Generative Design Workflow for MOFs

Diagram Title: Generative Design Pipeline for Novel MOFs

4.2 Key Research Reagents & Materials for MOF Research

| Category | Item | Function/Explanation |
|---|---|---|
| Building Blocks | Metal Salts (e.g., Zn(NO₃)₂, ZrCl₄, Cu(BF₄)₂) | Source of metal clusters (secondary building units, SBUs). |
| | Organic Linkers (dicarboxylic acids, tri-/tetratopic linkers) | Organic struts that connect SBUs to form the porous framework. |
| Synthesis | Solvothermal Reactor (Teflon-lined autoclave) | High-temperature/pressure vessel for MOF crystallization. |
| | Modulators (e.g., formic acid, acetic acid) | Monodentate ligands that control crystal growth and enable defect engineering. |
| Characterization | Powder X-ray Diffractometer (PXRD) | Confirms crystallinity and phase purity against simulated patterns. |
| | Gas Sorption Analyzer (N₂, CO₂) | Measures BET surface area, pore volume, and gas uptake isotherms. |

4.3 Quantitative Data: Generatively Designed MOFs for Gas Separation

Table 2: Generated MOF Candidates for CO₂/N₂ and CO₂/CH₄ Separation.

| Generated MOF (Notation) | Predicted CO₂ Uptake (mmol/g, 1 bar, 298 K) | Predicted CO₂/N₂ Selectivity (IAST, 0.2 bar) | Synthesized? | Key Property from Generation |
|---|---|---|---|---|
| Zn-MOF-GenX1 | 5.2 | 180 | Yes | Optimal pore diameter (~0.5 nm) |
| Zr-MOF-GenA5 | 3.8 | 250 | Yes | Functionalized amine site density |
| Mg-MOF-GenB2 | 6.1 | 95 | No (prediction only) | High isosteric heat of adsorption (Qₛₜ) |
| Ca-MOF-GenC7 | 4.5 | 310 | Pending | Polarizable framework with open metal sites |
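The selectivity column above is labeled IAST (ideal adsorbed solution theory). Consider the simplest case, where each pure-gas isotherm is fitted by a single-site Langmuir model (an assumption; the table does not state which isotherm models were used). Binary IAST then reduces to finding the adsorbed-phase mole fraction at which the two pure-component reduced spreading pressures, q_sat ln(1 + bP°), are equal. This minimal sketch uses hypothetical Langmuir parameters and does not reproduce any value in the table.

```python
import math
from typing import Tuple

Isotherm = Tuple[float, float]  # single-site Langmuir: (q_sat in mmol/g, b in 1/bar)


def langmuir_loading(iso: Isotherm, p: float) -> float:
    q_sat, b = iso
    return q_sat * b * p / (1.0 + b * p)


def reduced_spreading_pressure(iso: Isotherm, p: float) -> float:
    """Integral of q(p')/p' from 0 to p for a Langmuir isotherm: q_sat * ln(1 + b p)."""
    q_sat, b = iso
    return q_sat * math.log1p(b * p)


def iast_binary(p_total: float, y1: float, iso1: Isotherm, iso2: Isotherm, tol: float = 1e-12):
    """Solve binary IAST by bisection on the adsorbed-phase mole fraction x1 (0 < y1 < 1).

    Condition: pi1(P*y1/x1) = pi2(P*y2/x2); the difference decreases monotonically in x1.
    Returns (x1, q1, q2, selectivity) with selectivity = (x1/x2) / (y1/y2).
    """
    y2 = 1.0 - y1
    lo, hi = 1e-12, 1.0 - 1e-12
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        diff = (reduced_spreading_pressure(iso1, p_total * y1 / mid)
                - reduced_spreading_pressure(iso2, p_total * y2 / (1.0 - mid)))
        if diff > 0.0:
            lo = mid  # component 1 is under-represented in the adsorbed phase
        else:
            hi = mid
    x1 = 0.5 * (lo + hi)
    x2 = 1.0 - x1
    # Total loading from the ideal-mixing rule: 1/q_tot = x1/q1°(p1°) + x2/q2°(p2°).
    q_tot = 1.0 / (x1 / langmuir_loading(iso1, p_total * y1 / x1)
                   + x2 / langmuir_loading(iso2, p_total * y2 / x2))
    return x1, x1 * q_tot, x2 * q_tot, (x1 / x2) / (y1 / y2)


if __name__ == "__main__":
    co2, n2 = (6.0, 3.0), (4.0, 0.05)  # hypothetical Langmuir fits, not from the table above
    x1, q_co2, q_n2, s = iast_binary(p_total=1.0, y1=0.15, iso1=co2, iso2=n2)
    print(f"x_CO2 = {x1:.3f}, q_CO2 = {q_co2:.2f} mmol/g, q_N2 = {q_n2:.3f} mmol/g, S = {s:.0f}")
```

Bisection is used because the spreading-pressure difference is monotonic in x₁, so a root is always bracketed on (0, 1). In the Henry's-law limit (low pressure), the selectivity tends to the ratio of Henry constants, (q_sat,1·b₁)/(q_sat,2·b₂).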

4.4 Experimental Protocol: Synthesis & Testing of a Generated Zr-MOF

Objective: Synthesize a generatively designed, amine-functionalized Zr-MOF for post-combustion CO₂ capture.

- Computational Generation: A conditional VAE, trained on Zr-based MOFs, generates linker structures with amine groups. Top candidates are filtered for synthetic accessibility and thermal stability.